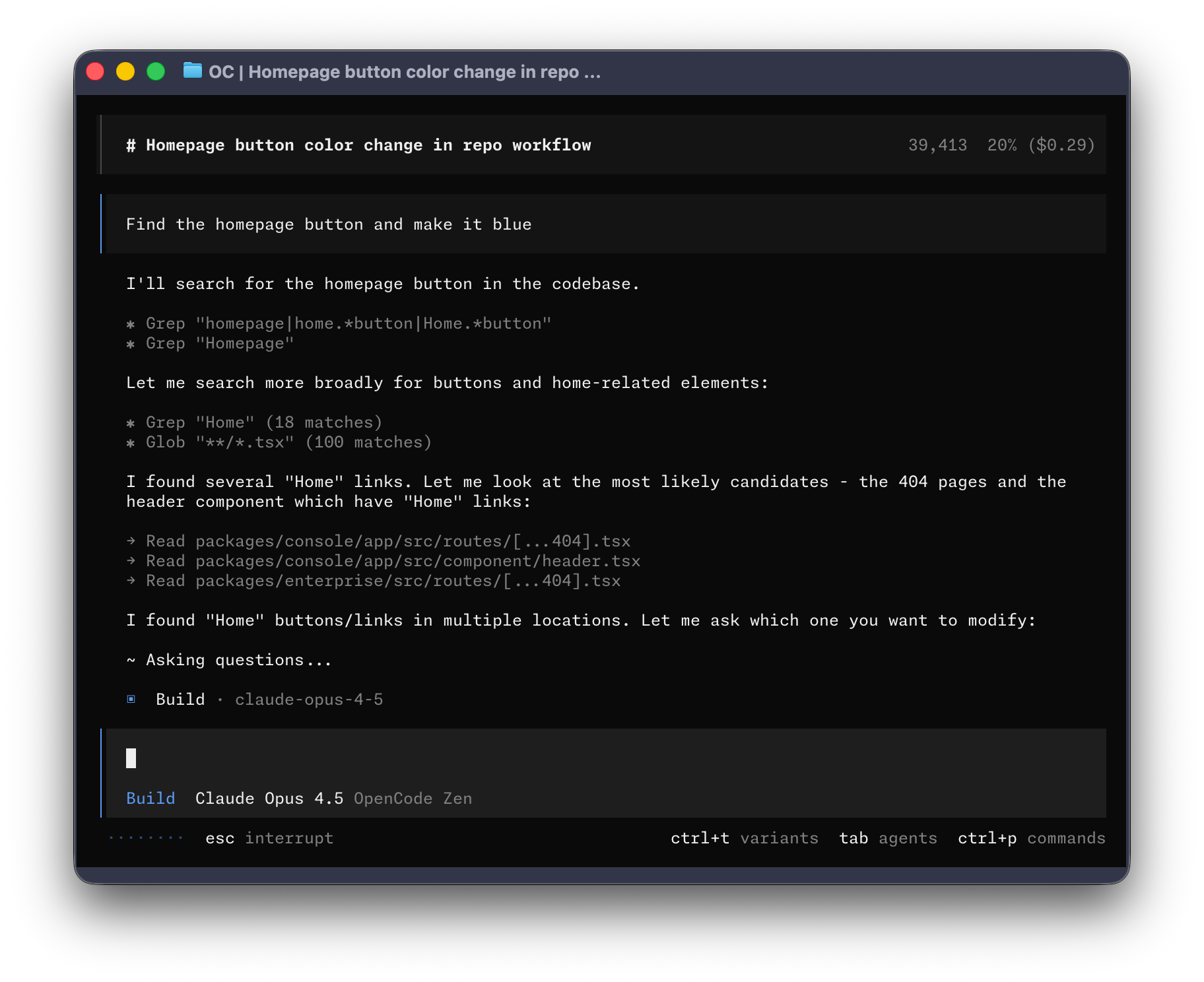

How openclaw/openclaw Works

OpenClaw is a local-first AI assistant framework that positions itself as the open-source, power-user alternative to closed, cloud-based assistants such as ChatGPT or Google Assistant. Its core competitive advantages are privacy (it runs on the user's own devices), omni-channel integration (it connects to WhatsApp, Slack, iMessage, and others), and extensive extensibility through a plugin and skills architecture. Whereas commercial assistants prioritize ease of use for the mass market, OpenClaw targets developers and technically proficient users who want granular control, deep customization, and the ability to integrate the assistant into their personal workflows and hardware ecosystem.

Overview

OpenClaw's mission is to provide a powerful, self-hosted, privacy-focused personal AI assistant that integrates into users' existing messaging platforms and devices and offers deep customization and extensibility.

OpenClaw is a credible local-first personal assistant platform with unusually broad channel reach, companion apps, and a real extension ecosystem, all of which indicate substantial engineering depth. It is best suited to power users or small teams who want a privacy-oriented assistant that lives on their own devices and can act across the communication tools they already use. The main adoption risks are security hardening (outbound web access and remote exposure of the Gateway), extension compatibility, and operational reliability that is not yet at enterprise grade. Pursue it if your strategy values local-first personal automation and you can assign an owner for configuration, security posture, and controlled upgrades.

Adopt only with a defined security baseline: lock down gateway exposure, restrict outbound web access, and standardize a pinned set of plugins with a tested upgrade path.

How It Works: End-to-End Flows

Interacting with the Assistant via a Messaging App

This is the primary user journey: a user talks to their assistant from an everyday messaging app such as WhatsApp or LINE. The message reaches the channel connector running under the local OpenClaw Gateway, which checks the sender against the configured access policies. An unknown sender is offered a pairing code instead of being silently ignored; an authorized sender's message is passed to the agent runtime, which processes the request, optionally calling tools or consulting its long-term memory. The agent's reply is then chunked and formatted for the target platform and delivered back to the original conversation. The loop is designed to feel as responsive as chatting with a person while enforcing access and privacy controls behind the scenes.

- User sends a message from a connected channel (e.g., LINE, WhatsApp)

- The channel connector receives the message and performs an access control check

- If unauthorized, the system initiates the user pairing flow and sends a pairing code

- If authorized, the message is passed to the Agent Intelligence core

- The agent runtime optionally repairs the conversation history and invokes the AI model

- The assistant's reply is sent back through the outbound delivery pipeline, with chunking and formatting

Agent uses a Physical Device to Complete a Task

This flow demonstrates OpenClaw's ability to bridge the digital and physical worlds. A user asks the assistant to perform a task that requires hardware access, such as 'take a photo and describe what you see'. The AI agent, running on the central Gateway, understands the request requires a camera. It then uses the device capability protocol to command a paired companion app (e.g., on the user's iPhone) to activate its camera. The phone takes the picture and sends the image data back to the agent. The agent then analyzes the image (likely with a multi-modal AI model) and formulates a text description, which is sent back to the user in chat. This flow showcases the seamless orchestration between the central AI brain and its distributed 'limbs' on various devices.

- User asks the assistant a question requiring hardware access (e.g., 'Take a picture')

- The companion app (e.g., on iOS/Android) has already discovered the Gateway and maintains a connection to it

- The Agent runtime determines the need for a hardware-specific tool (e.g., `camera.snap`)

- The Gateway sends a command to the companion app via the device capability protocol

- The companion app executes the native function (e.g., activates the camera) and returns the result (e.g., image data)

- The agent receives the tool result and uses it to generate the final response to the user

Operator Manages the Assistant via the Control UI

This flow describes how an operator (the user who owns the assistant) manages their OpenClaw instance. The operator opens a web browser and navigates to the local Control UI. The UI establishes a secure WebSocket connection to the Gateway, using device-specific credentials if available. Once connected, the operator has a real-time dashboard showing system status, logs, and active conversations. They can perform administrative tasks like inspecting a chat session's history, changing a configuration setting (like the AI model being used), or approving a new user who has requested access via a pairing code. This provides a powerful, centralized point of control for all aspects of the assistant's operation.

- Operator opens the Control UI in their browser

- The UI discovers and establishes a secure WebSocket connection to the local Gateway

- The UI receives a stream of real-time events and detects any missed events

- Operator views the list of active sessions and selects one to inspect the chat history

- Operator navigates to the configuration screen and changes a setting, triggering a Gateway action

Developer Extends the Assistant with a New Plugin

This flow outlines how a developer can add new functionality to OpenClaw. The developer creates a new plugin: a directory containing a manifest file (`openclaw.plugin.json`) and the plugin's TypeScript code. In the manifest, they declare the capabilities the plugin provides, such as a new channel connector for a niche messaging app. In the code, they use the Plugin API to register the channel's logic. Once the plugin is placed in the workspace's `plugins` folder, the OpenClaw Gateway discovers it automatically on the next startup. The loader validates the plugin's configuration and registers its capabilities, and the new channel becomes available for configuration and use. This demonstrates the modular, drop-in nature of the OpenClaw ecosystem.

- Developer creates a plugin directory with a manifest file declaring its capabilities

- Developer uses the Plugin API in their code to register a new channel, tool, or service

- Developer places the plugin in the workspace's 'plugins' directory

- On restart, the OpenClaw Gateway's plugin loader discovers, validates, and registers the new plugin

- The new capability (e.g., channel) is now available for use and configuration in the system

Key Features

Gateway Control Plane

The Gateway is the central nervous system of OpenClaw: a single, local-first control plane that manages all connections, sessions, configuration, and events. Its design emphasizes security and operational clarity. It authenticates every client (CLIs, UIs, device nodes) and communicates with them over a structured WebSocket protocol, and its built-in security audits flag unsafe configurations so that a powerful local tool is not accidentally exposed.

- Multi-Method Connection Authentication — 【User Value】 Ensures that only authorized clients can connect to and control the assistant, protecting it from unauthorized access, especially when it is exposed beyond the local machine. 【Design Strategy】 Implement a flexible, layered authentication system that prefers identity-based methods while falling back to traditional shared secrets, all resolved automatically from configuration and environment. 【Business Logic】 - Step 1: Resolve credentials. The system looks first for a password, then for a token, in configuration files and environment variables, including legacy variable names for backward compatibility. - Step 2: Determine the authentication mode. The mode defaults to 'password' if a password is found; otherwise it defaults to 'token'. - Step 3: Check for a Tailscale identity. If this is enabled and the connection arrives through the user's Tailscale network, the system tries to verify the user's identity with Tailscale: it reads the proxy headers and confirms the user login with a 'whois' lookup. This gives a passwordless experience inside the user's private network. - Step 4: Fall back to a credential check. If Tailscale verification does not apply or fails, the system checks the configured password or token, using a timing-safe comparison so that response times do not leak information about the secret. - Step 5: Validate the configuration. The system verifies that the credential required by the chosen mode actually exists (e.g., a token must be set in token mode), refusing a misconfigured, insecure startup. 【Tradeoff】 The layered approach adds flexibility at the cost of complexity. Tailscale identity verification introduces a dependency on Tailscale and is only secure if the proxy headers are configured correctly.

- Configuration Security Auditing — 【User Value】 Proactively warns the user about potentially risky configurations, preventing accidental exposure of the assistant or the execution of high-impact tools by unauthorized parties. 【Design Strategy】 Create a programmatic audit that scans the system's configuration and reports on a scale of severity (info, warning, critical) to guide the user toward safer setups. 【Business Logic】 - Step 1: Audit network exposure. It flags public exposure (e.g., using Tailscale Funnel) as a 'critical' risk, and private network exposure (Tailscale Serve) as 'info'. - Step 2: Audit tool access policies. It checks if any high-powered tools (like those with elevated system access) have a completely open access policy (e.g., allow from anyone, denoted as '*'). This is flagged as 'critical'. It also warns if an allow-list for a tool is unusually large (more than 25 entries), suggesting it may be too permissive. - Step 3: Audit messaging channel policies. It reviews the Direct Message (DM) policy for each connected channel. A policy of 'open' (allowing anyone to DM the assistant) is flagged as a risk, with a recommendation to use 'pairing' or an explicit allow-list. - Step 4: Incorporate dynamic approvals. The audit considers not just the static configuration file, but also dynamically approved users from the 'pairing' system's database, providing a complete picture of who has access.

- First-Run Automation — 【User Value】 Allows a pre-configured workspace to perform initial setup tasks or send a welcome message automatically on first boot, streamlining the user's onboarding experience. 【Design Strategy】 On startup, the system checks for a special instruction file within the agent's workspace and executes the contents once as the main user, without requiring manual intervention. 【Business Logic】 - Step 1: Check for instructions. The gateway looks for a file named 'BOOT.md' in the current workspace directory. - Step 2: Skip if absent. If the file doesn't exist or is empty, the process is skipped. - Step 3: Construct a command. If the file exists, its content is wrapped in a special prompt instructing the agent to follow the instructions and use its tools (like the message tool) as needed. - Step 4: Execute in the main session. The command is run non-interactively within the primary user's main session. This ensures the action is performed with the correct identity and context, but the output is not delivered back to a chat channel, acting as a one-time startup script.
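The connection-authentication steps above can be sketched in TypeScript as follows. This is a minimal illustration under stated assumptions, not OpenClaw's implementation: the config fields, the environment variable names (`OPENCLAW_GATEWAY_PASSWORD`, `OPENCLAW_GATEWAY_TOKEN`, `LEGACY_GATEWAY_TOKEN`), and all function names are hypothetical, and the Tailscale `whois` verification is assumed to have happened before `authorizeConnect` is called.

```typescript
// Sketch only: config fields and environment variable names are hypothetical.
type AuthMode = "password" | "token";

interface GatewayAuthConfig {
  password?: string;
  token?: string;
  allowTailscale?: boolean;
}

interface ResolvedAuth {
  mode: AuthMode;
  password?: string;
  token?: string;
  allowTailscale: boolean;
}

type Env = Record<string, string | undefined>;

// Steps 1, 2 and 5: resolve secrets (config first, then env, then a legacy env name),
// choose the mode, and refuse to start if the chosen mode has no secret.
function resolveGatewayAuth(cfg: GatewayAuthConfig, env: Env): ResolvedAuth {
  const password = cfg.password ?? env["OPENCLAW_GATEWAY_PASSWORD"];
  const token = cfg.token ?? env["OPENCLAW_GATEWAY_TOKEN"] ?? env["LEGACY_GATEWAY_TOKEN"];
  const mode: AuthMode = password ? "password" : "token";
  if (mode === "token" && !token) {
    throw new Error("gateway auth: token mode selected but no token is configured");
  }
  return { mode, password, token, allowTailscale: cfg.allowTailscale ?? false };
}

// Step 4: compare secrets in time that does not depend on where they first differ.
function safeEqual(a: string, b: string): boolean {
  let diff = a.length ^ b.length;
  const n = Math.max(a.length, b.length);
  for (let i = 0; i < n; i++) {
    // charCodeAt returns NaN past the end of a string; `| 0` maps NaN to 0.
    diff |= (a.charCodeAt(i) | 0) ^ (b.charCodeAt(i) | 0);
  }
  return diff === 0;
}

interface PresentedAuth {
  password?: string;
  token?: string;
  // Assumed to be set only after the caller verified the login with a Tailscale whois lookup.
  tailscaleLogin?: string;
}

// Steps 3 and 4: accept a verified Tailscale identity if allowed, otherwise check the secret.
function authorizeConnect(auth: ResolvedAuth, presented: PresentedAuth): boolean {
  if (auth.allowTailscale && presented.tailscaleLogin) return true;
  const expected = auth.mode === "password" ? auth.password : auth.token;
  const given = auth.mode === "password" ? presented.password : presented.token;
  return expected !== undefined && given !== undefined && safeEqual(expected, given);
}
```

Node.js offers `crypto.timingSafeEqual` for the same purpose (it requires equal-length buffers); the hand-written loop keeps the sketch free of runtime-specific imports.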
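The configuration-audit rules above map naturally onto a function that returns severity-tagged findings. The sketch below assumes a simplified, hypothetical config shape; only the severity levels, the Funnel/Serve classification, the `*` rule, the 25-entry threshold, and the merging of pairing approvals come from the text.

```typescript
// Sketch only: the config shape is hypothetical; the rules and the 25-entry threshold follow the text.
type Severity = "info" | "warn" | "critical";
interface Finding { severity: Severity; message: string }

type DmPolicy = "open" | "allowlist" | "pairing" | "disabled";

interface AuditInput {
  tailscaleMode?: "off" | "serve" | "funnel";
  elevatedTools?: Record<string, string[]>; // tool name -> allow-list; "*" means anyone
  channels?: Record<string, { dmPolicy: DmPolicy; allowFrom?: string[] }>;
}

const LARGE_ALLOWLIST = 25;

function auditConfig(cfg: AuditInput, pairedByChannel: Record<string, string[]> = {}): Finding[] {
  const findings: Finding[] = [];

  // Step 1: network exposure.
  if (cfg.tailscaleMode === "funnel") {
    findings.push({ severity: "critical", message: "Gateway is publicly reachable via Tailscale Funnel" });
  } else if (cfg.tailscaleMode === "serve") {
    findings.push({ severity: "info", message: "Gateway is reachable inside the tailnet via Tailscale Serve" });
  }

  // Step 2: elevated tools must not be open to anyone, and allow-lists should stay small.
  const tools = cfg.elevatedTools ?? {};
  Object.keys(tools).forEach((name) => {
    const allow = tools[name];
    if (allow.indexOf("*") !== -1) {
      findings.push({ severity: "critical", message: `elevated tool "${name}" is allowed for anyone ("*")` });
    } else if (allow.length > LARGE_ALLOWLIST) {
      findings.push({ severity: "warn", message: `elevated tool "${name}" has ${allow.length} allow-list entries` });
    }
  });

  // Steps 3-4: DM policy per channel; static and pairing-approved senders are counted together.
  const channels = cfg.channels ?? {};
  Object.keys(channels).forEach((name) => {
    const ch = channels[name];
    if (ch.dmPolicy === "disabled") return;
    if (ch.dmPolicy === "open") {
      // Severity level assumed; the text only says this is flagged as a risk.
      findings.push({ severity: "warn", message: `${name}: DM policy is "open"; prefer "pairing" or an allow-list` });
      return;
    }
    const allowed = new Set((ch.allowFrom ?? []).concat(pairedByChannel[name] ?? []));
    findings.push({ severity: "info", message: `${name}: ${allowed.size} sender(s) allowed (config + pairing)` });
  });

  return findings;
}
```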

Agent Intelligence & Execution

This module is the 'brain' of the assistant. It manages the entire lifecycle of an agent's response, from receiving a user prompt to generating a final answer. It handles interactions with the underlying large language models (LLMs), orchestrates tool use, manages conversational memory through compaction, and contains critical reliability features like automatically repairing conversation transcripts to ensure compatibility with strict model APIs.

- Automated Conversation Transcript Repair — 【User Value】 Prevents conversations from failing because of malformed history. Some model providers' APIs are strict and reject a request if the conversation history is not formatted exactly as they expect, especially after complex tool use. This feature automatically 'cleans' the history before it is sent, making the assistant much more reliable. 【Design Strategy】 Before sending the conversation history to the model, run a series of sanitization and repair steps that enforce the provider's formatting rules, such as ensuring that tool calls and their results are correctly paired and ordered. 【Business Logic】 - Step 1: Remove incomplete tool calls. The system scans the assistant's previous messages and removes any malformed 'tool call' blocks (e.g., ones missing required arguments). If this leaves an assistant message empty, the whole message is deleted from the history. - Step 2: Remove orphaned tool results. Every 'tool result' message must appear directly after the assistant message that issued the call; tool results found anywhere else are treated as 'orphans' and deleted. - Step 3: De-duplicate tool results. If the same tool result (identified by its unique ID) appears more than once, the duplicates are removed. - Step 4: Inject synthetic error results. If the assistant made a tool call but the matching result is missing, the system inserts a synthetic 'error' result. This satisfies the provider's requirement that every tool call has a result and tells the model that the tool did not complete. - Step 5: Skip repair on aborted runs. If the assistant's last turn was aborted or ended in an error, the entire repair process is skipped, so the system does not compound the problem by 'fixing' an already broken, partial state.

- Dynamic Context Window Reservation — 【User Value】 Improves the reliability of long conversations by preventing 'context overflow' errors. It ensures that the context window always keeps enough free space for the model to respond and to perform summarization (compaction). 【Design Strategy】 Enforce a minimum 'reserve' of tokens in the context window. If the configured reserve is below this safety floor, raise it for the current run. 【Business Logic】 - Step 1: Define a safety floor. The default minimum reserve is 20,000 tokens; the user can configure this value. - Step 2: Check the current setting. Before each agent run, the system reads the configured token reservation for compaction. - Step 3: Apply an override if needed. If the configured reservation is below the floor, the system raises it to the floor value for the duration of that run only. - Step 4: Report the override. The system logs that an override was applied, so the behavior is visible when debugging.

- Safe Discovery of Local Models — 【User Value】 Lets users run local AI models (via Ollama) out of the box while avoiding common stability issues. 【Design Strategy】 Automatically scan the local machine for Ollama models and apply a safe default configuration to each, specifically disabling response streaming, which is known to cause problems. 【Business Logic】 - Step 1: Scan for local models. The system requests the list of installed models from the local Ollama server's API, with a 5-second timeout so that an unresponsive server cannot hang the system. - Step 2: Handle discovery failure. If the server does not respond or returns an empty list, the system logs a warning and continues without local models. - Step 3: Apply compatibility settings. For each discovered model, the system disables response streaming; an accompanying comment marks this as a workaround for a known issue, guiding users who want to re-enable it. - Step 4: Heuristically flag capabilities. The system checks the model's name for keywords such as 'reasoning' to guess whether it is a reasoning model, providing a hint in the UI.
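The five transcript-repair steps above can be illustrated with a simplified message model. This is a sketch under assumptions: the `Msg` types are hypothetical, a "malformed" tool call is taken to mean one with no arguments, and real provider transcripts carry many more fields.

```typescript
// Sketch only: this message model is hypothetical and far simpler than a real provider transcript.
interface ToolCall { id: string; name: string; args?: Record<string, unknown> }
interface UserMsg { role: "user"; text: string }
interface AssistantMsg {
  role: "assistant";
  text: string;
  toolCalls: ToolCall[];
  stopReason?: "stop" | "aborted" | "error";
}
interface ToolResultMsg { role: "toolResult"; toolCallId: string; content: string; isError?: boolean }
type Msg = UserMsg | AssistantMsg | ToolResultMsg;

function repairTranscript(history: Msg[]): Msg[] {
  // Step 5: if the latest assistant turn was aborted or failed, leave the history untouched.
  for (let i = history.length - 1; i >= 0; i--) {
    const m = history[i];
    if (m.role !== "assistant") continue;
    if (m.stopReason === "aborted" || m.stopReason === "error") return history;
    break;
  }

  const out: Msg[] = [];
  const seenResults = new Set<string>();
  let i = 0;
  while (i < history.length) {
    const m = history[i++];
    // Step 2: a tool result reached here does not directly follow its call, so it is an orphan.
    if (m.role === "toolResult") continue;
    if (m.role === "user") {
      out.push(m);
      continue;
    }
    // Step 1: drop malformed calls (here: calls without arguments), then drop empty messages.
    const calls = m.toolCalls.filter((c) => c.args !== undefined);
    if (calls.length === 0 && m.text.trim() === "") continue;
    out.push({ ...m, toolCalls: calls });

    // Consume the contiguous tool results that directly follow this assistant message.
    const callIds = new Set(calls.map((c) => c.id));
    while (i < history.length && history[i].role === "toolResult") {
      const r = history[i++] as ToolResultMsg;
      // Step 3: keep only the first result per call id; results for removed calls are dropped.
      if (callIds.has(r.toolCallId) && !seenResults.has(r.toolCallId)) {
        seenResults.add(r.toolCallId);
        out.push(r);
      }
    }
    // Step 4: every surviving call gets a result, synthesizing an error where none exists.
    for (const c of calls) {
      if (seenResults.has(c.id)) continue;
      seenResults.add(c.id);
      out.push({ role: "toolResult", toolCallId: c.id, content: "Tool result missing: the tool did not complete.", isError: true });
    }
  }
  return out;
}
```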
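The context-window reservation described above is a per-run floor: the effective reserve is max(configured, floor). A minimal sketch, with hypothetical function names and the 20,000-token default from the text:

```typescript
// Sketch only: function names and the return shape are hypothetical; 20,000 is the default floor from the text.
const DEFAULT_RESERVE_FLOOR_TOKENS = 20000;

interface ReserveDecision {
  reserveTokens: number; // reserve to use for this run
  overridden: boolean; // true when the configured value was raised to the floor
}

function effectiveReserve(configured: number, floor: number = DEFAULT_RESERVE_FLOOR_TOKENS): ReserveDecision {
  // The stored configuration is never modified; the raise applies to this run only.
  return configured >= floor
    ? { reserveTokens: configured, overridden: false }
    : { reserveTokens: floor, overridden: true };
}

function prepareRun(configured: number, log: (msg: string) => void): number {
  const decision = effectiveReserve(configured);
  if (decision.overridden) {
    // Step 4: make the override visible for debugging.
    log(`compaction reserve raised from ${configured} to ${decision.reserveTokens} tokens for this run`);
  }
  return decision.reserveTokens;
}
```

For example, `prepareRun(8000, console.log)` returns 20000 and logs one line, while a configured reserve of 32000 is used unchanged.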
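A minimal sketch of the Ollama discovery described above. It relies on Ollama's `GET /api/tags` endpoint (default port 11434) and on `fetch` with `AbortSignal.timeout`, available in modern browsers and Node.js 18+; the `DiscoveredModel` shape and the keyword list are hypothetical. The pure `toModelConfigs` step is kept separate so it can be tested without a running server.

```typescript
// Sketch only: GET /api/tags is Ollama's "list local models" endpoint;
// the DiscoveredModel shape and the keyword list are hypothetical.
interface OllamaTagsResponse {
  models?: { name: string }[];
}

interface DiscoveredModel {
  id: string;
  provider: "ollama";
  reasoning: boolean;
  params: { streaming: boolean };
}

const REASONING_HINTS = /reasoning/i; // illustrative keyword list; name-based guesses can be wrong

function toModelConfigs(tags: OllamaTagsResponse): DiscoveredModel[] {
  return (tags.models ?? []).map(
    (m): DiscoveredModel => ({
      id: m.name,
      provider: "ollama",
      reasoning: REASONING_HINTS.test(m.name),
      // Streaming disabled as a compatibility workaround; set to true to re-enable it.
      params: { streaming: false },
    }),
  );
}

async function discoverOllamaModels(baseUrl = "http://127.0.0.1:11434"): Promise<DiscoveredModel[]> {
  try {
    // Abort after 5 seconds so an unresponsive server cannot stall startup.
    const res = await fetch(`${baseUrl}/api/tags`, { signal: AbortSignal.timeout(5000) });
    if (!res.ok) throw new Error(`HTTP ${res.status}`);
    const models = toModelConfigs((await res.json()) as OllamaTagsResponse);
    if (models.length === 0) console.warn("ollama: server reachable but no local models found");
    return models;
  } catch (err) {
    // Discovery failure is non-fatal: continue without local models.
    console.warn(`ollama: discovery skipped (${String(err)})`);
    return [];
  }
}
```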

Omnichannel Communication

This module connects OpenClaw to the outside world, allowing it to send and receive messages across a wide array of platforms like WhatsApp, Telegram, Slack, and LINE. It's designed as a plugin-based system where each channel is a self-contained connector. The core logic focuses on secure and user-friendly access control, handling everything from new user 'pairing' flows to group message policies and reliable media handling.

- Dynamic Channel Connector Management — 【User Value】 Provides a flexible way to add, remove, and manage connections to various chat platforms without restarting the entire system, and makes the setup process intuitive through a structured catalog. 【Design Strategy】 Treat each channel integration as a 'plugin' that can be discovered and loaded at runtime. Present these plugins in a browsable catalog with rich metadata for user-friendly configuration. 【Business Logic】 - Step 1: Discover channel plugins. The system scans predefined directories for channel connector plugins. - Step 2: Load external catalogs. It also looks for external JSON files that define additional, potentially private or enterprise-specific, channel plugins. It gracefully ignores missing or invalid files. - Step 3: Build a unified catalog. It merges all discovered plugins and catalog entries into a single, user-facing list. Each entry contains metadata like a user-friendly label, icon, documentation link, and installation details (e.g., the required 'npm' package name). - Step 4: Cache for performance. The loaded plugin information is cached in memory. The cache is automatically invalidated if the underlying plugin registry changes, ensuring changes are picked up while maintaining fast performance for repeated lookups.

- Secure DM & Group Access with User Pairing — 【User Value】 Protects the assistant from unauthorized access in both direct messages and group chats, while providing a simple, chat-based workflow for new users to request access. 【Design Strategy】 Implement a multi-layered access control policy for each channel that combines a static configuration with a dynamic, persisted allow-list. For unknown users, initiate a 'pairing' flow instead of silently ignoring them. 【Business Logic】 (Example using LINE channel) - Step 1: Define policies. An admin can set a 'DM Policy' (e.g., open, allowlist, pairing, disabled) and a 'Group Policy' (e.g., open, allowlist, disabled). The secure default for DMs is 'pairing'. - Step 2: Receive inbound message. When a new message arrives, the system checks if the sender is on the access list. - Step 3: Build effective allow-list. The access list is a combination of users defined in the static config file and users who have been previously approved and are stored in a persistent 'pairing' database. - Step 4: Handle unauthorized DM. If the sender is not on the list and the DM policy is 'pairing', the system doesn't just block the message. Instead, it generates a unique pairing code and sends a reply to the user with the code and instructions on how an admin can approve them. - Step 5: Block or allow. If the user is on the allow-list, the message is processed. If they are not, and the policy is not 'pairing', the message is blocked and logged. 【Tradeoff】 The pairing flow is more user-friendly than a silent block, but it adds an extra step for both the new user and the admin who must approve the request.

- Reliable Outbound Message & Media Delivery — 【User Value】 Ensures that the assistant's replies are delivered correctly and reliably, respecting the unique limitations of each chat platform (like message length) and preventing resource exhaustion from large file attachments. 【Design Strategy】 Use a shared outbound pipeline that incorporates channel-specific adapters for formatting, chunking, and sending, plus a universal, streaming-based media download guard. 【Business Logic】 (Example using LINE channel) - Step 1: Apply response prefix. The system can be configured to add a prefix (e.g., a specific emoji or bot name) to all replies on a given channel. - Step 2: Chunk long messages. Before sending, the shared pipeline uses a markdown-aware chunking function to split long text messages into smaller pieces that fit within the character limits of the target platform (e.g., LINE). - Step 3: Batch send chunks. A channel-specific function sends these chunks as a sequence of separate messages. - Step 4: Handle media bounds. When processing an inbound message with media (e.g., an image), the download function streams the file. It counts the bytes as they arrive and immediately aborts the download with an error if the total size exceeds a configured maximum (e.g., 25MB), preventing the server from being overwhelmed by excessively large files.
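The channel-catalog building and caching steps above could look like this in outline. Assumptions: the entry fields, a numeric registry `version` that changes whenever plugins change, and the rule that installed plugins win over external catalog entries with the same id are all illustrative.

```typescript
// Sketch only: entry fields, the registry version counter, and the precedence rule are hypothetical.
interface CatalogEntry {
  id: string;
  label: string;
  docsUrl?: string;
  npmPackage?: string;
}

interface PluginRegistry {
  version: number; // assumed to change whenever plugins are added or removed
  channels: CatalogEntry[];
}

let catalogCache: { version: number; entries: CatalogEntry[] } | null = null;

// Parse one external catalog file; missing (undefined) or invalid input yields no entries.
function parseExternalCatalog(raw: string | undefined): CatalogEntry[] {
  if (raw === undefined) return [];
  try {
    const doc = JSON.parse(raw) as { channels?: unknown };
    if (!Array.isArray(doc.channels)) return [];
    return doc.channels.filter(
      (e: any): e is CatalogEntry =>
        e !== null && typeof e === "object" && typeof e.id === "string" && typeof e.label === "string",
    );
  } catch {
    return [];
  }
}

function buildChannelCatalog(registry: PluginRegistry, externalFiles: (string | undefined)[]): CatalogEntry[] {
  // Reuse the cached catalog until the registry changes.
  if (catalogCache !== null && catalogCache.version === registry.version) return catalogCache.entries;

  const byId = new Map<string, CatalogEntry>();
  registry.channels.forEach((e) => byId.set(e.id, e));
  externalFiles.forEach((raw) =>
    parseExternalCatalog(raw).forEach((e) => {
      // Assumption: installed plugins take precedence over external entries with the same id.
      if (!byId.has(e.id)) byId.set(e.id, e);
    }),
  );
  const entries = Array.from(byId.values());
  catalogCache = { version: registry.version, entries };
  return entries;
}
```

In this sketch the cache key is the registry version alone, so edits to external catalog files are only picked up after the registry changes.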
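The DM access flow above (LINE example) reduces to a small decision function. This is a sketch with hypothetical names: the policy values come from the text, and the pairing-code generator is injected so that a real deployment can use a cryptographically secure source.

```typescript
// Sketch only: names are hypothetical; policy values follow the text.
type DmPolicy = "open" | "allowlist" | "pairing" | "disabled";

type AccessDecision =
  | { action: "process" }
  | { action: "pair"; code: string; reply: string }
  | { action: "block"; reason: string };

interface AllowSources {
  configAllowFrom: string[]; // static allow-list from the config file
  pairedAllowFrom: string[]; // senders approved earlier through pairing (persisted store)
}

function decideDmAccess(
  policy: DmPolicy,
  senderId: string,
  sources: AllowSources,
  newPairingCode: () => string, // inject a CSPRNG-backed generator in real use
): AccessDecision {
  if (policy === "disabled") return { action: "block", reason: "DMs are disabled on this channel" };
  if (policy === "open") return { action: "process" };

  // Step 3: the effective allow-list is the union of static config and approved pairings.
  const allowed = new Set(sources.configAllowFrom.concat(sources.pairedAllowFrom));
  if (allowed.has(senderId)) return { action: "process" };

  // Step 4: an unknown sender under "pairing" gets a code instead of silence.
  if (policy === "pairing") {
    const code = newPairingCode();
    return {
      action: "pair",
      code,
      reply: `You are not paired with this assistant yet. Ask its owner to approve pairing code ${code}.`,
    };
  }
  // Step 5: "allowlist" policy and the sender is not listed.
  return { action: "block", reason: "sender is not on the allow-list" };
}
```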
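Two pieces of the delivery pipeline above can be sketched briefly: splitting a reply into platform-sized chunks and bounding a streamed media download. The chunker is a deliberately simplified stand-in that prefers paragraph, line, and word boundaries; unlike the markdown-aware function described in the text, it does not keep fenced code blocks intact. All names are hypothetical.

```typescript
// Sketch only: a simplified boundary-preferring chunker, NOT markdown-aware
// (it can split inside a fenced code block). Names and limits are hypothetical.
function chunkText(text: string, limit: number): string[] {
  if (limit < 1) throw new Error("limit must be at least 1");
  const chunks: string[] = [];
  let rest = text;
  while (rest.length > limit) {
    // Look one character past the limit so a break exactly at `limit` is allowed.
    const head = rest.slice(0, limit + 1);
    let cut = head.lastIndexOf("\n\n"); // prefer a paragraph break
    if (cut <= 0) cut = head.lastIndexOf("\n"); // then a line break
    if (cut <= 0) cut = head.lastIndexOf(" "); // then a word break
    if (cut <= 0) cut = limit; // otherwise hard-split
    const piece = rest.slice(0, cut).replace(/\s+$/, "");
    if (piece.length > 0) chunks.push(piece);
    rest = rest.slice(cut).replace(/^\s+/, "");
  }
  if (rest.length > 0) chunks.push(rest);
  return chunks;
}

// Streaming size guard: count bytes as chunks arrive and fail as soon as the cap is exceeded.
class ByteLimitGuard {
  private received = 0;
  private readonly maxBytes: number;

  constructor(maxBytes: number) {
    this.maxBytes = maxBytes;
  }

  push(chunk: Uint8Array): void {
    this.received += chunk.byteLength;
    if (this.received > this.maxBytes) {
      throw new Error(`media exceeds limit of ${this.maxBytes} bytes`);
    }
  }

  get total(): number {
    return this.received;
  }
}
```

In a download loop, call `guard.push(value)` for each `{ done, value }` returned by `response.body.getReader().read()` and cancel the reader if it throws; a 25 MiB cap would be `new ByteLimitGuard(25 * 1024 * 1024)`. Because the check runs per chunk, an oversized file is rejected without ever being buffered in full.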

Extensibility & Customization

This module represents a core pillar of OpenClaw's value: its extensibility. It provides a comprehensive system for users and developers to add new capabilities through plugins, skills, and hooks. Plugins can introduce entirely new channels, AI model providers, or background services. Skills are reusable packages of commands and logic that the agent can learn. Hooks allow for deep customization by letting developers inject logic into the agent's core lifecycle.

- Structured Plugin Manifest & Registration — 【User Value】 Establishes a clear, standardized way for developers to create extensions, making the ecosystem robust and easy to manage. The manifest acts as a contract, declaring what the plugin does and how to configure it. 【Design Strategy】 Require every plugin to include a manifest file (`openclaw.plugin.json`) that defines its identity, capabilities, and configuration schema. Use this manifest to drive discovery, validation, and registration. 【Business Logic】 - Step 1: Manifest Declaration. A plugin developer creates a manifest file declaring a unique ID, name, version, and the capabilities it provides (e.g., which channels, tools, or AI providers it adds). - Step 2: Configuration Schema. The developer can include a JSON Schema within the manifest. This defines the specific configuration options the plugin needs, including data types and validation rules. This schema is also used to auto-generate a configuration UI. - Step 3: Registration Function. The plugin's code exports a `register` function. When the plugin is loaded, the system calls this function and passes it an API object. - Step 4: Capability Registration. Inside the `register` function, the developer uses the provided API to register its features, such as `api.registerChannel(...)`, `api.registerTool(...)`, or `api.registerHttpHandler(...)`. The system adds these to a central registry for runtime use.

- Multi-Faceted Plugin API Surface — 【User Value】 Provides developers with a rich and secure set of functions to integrate their extensions deeply into the OpenClaw core, from adding new chat commands to creating background services or custom web endpoints. 【Design Strategy】 Expose a single, comprehensive `OpenClawPluginApi` object to plugins during registration, offering a well-defined and version-stable interface for all supported extension points. 【Business Logic】 - Step 1: Register Tools. A plugin can add new tools that the AI agent can use by calling `api.registerTool()`. The tool gets access to a sandboxed context with workspace information and the session it's running in. - Step 2: Register Channels & Providers. Plugins can add support for new messaging platforms (`api.registerChannel()`) or new AI model providers (`api.registerProvider()`). - Step 3: Register Web and CLI extensions. A plugin can add custom web pages or API endpoints to the Gateway's web server (`api.registerHttpRoute()`) or add new commands to the `openclaw` command-line tool (`api.registerCli()`). - Step 4: Register Hooks & Services. For advanced use cases, plugins can register background services with `start` and `stop` lifecycle methods (`api.registerService()`) or register 'hook' functions that run at specific points in the agent's lifecycle (`api.registerHook()`). - Step 5: Access Configuration & Logging. The API provides secure access to the plugin's own validated configuration (`api.pluginConfig`) and a namespaced logger for clean logging.

- Lifecycle-Aware Plugin Loader — 【User Value】 Safely loads, validates, and manages all installed plugins, preventing conflicts and providing clear diagnostics if a plugin fails to load, which enhances system stability. 【Design Strategy】 Implement an orchestration layer that manages the entire lifecycle of plugins, from discovery and validation to registration and error handling, with caching for performance. 【Business Logic】 - Step 1: Discover Plugins. The loader scans configured directories (including the workspace and system folders) for potential plugins. - Step 2: Validate Manifests. It reads the manifest file for each plugin and validates its structure. - Step 3: Check if Enabled. It resolves whether a plugin should be loaded based on the user's main configuration file and auto-enable rules. - Step 4: Validate Configuration. For enabled plugins, it validates the user-provided configuration against the schema defined in the plugin's manifest. If validation fails, the plugin is disabled and an error is logged. - Step 5: Register Capabilities. If all checks pass, it calls the plugin's `register` function to add its capabilities to the system. - Step 6: Handle Conflicts and Errors. It catches errors during loading (e.g., syntax errors) and registration. If two plugins have the same ID, it loads the one with higher priority (e.g., workspace plugin overrides a bundled one) and disables the other, logging the conflict. - Step 7: Cache Results. The final state of the plugin registry (which plugins are loaded, disabled, or failed) is cached to speed up subsequent starts.

- Multi-Source Skills Ecosystem — 【User Value】 Empowers users to easily extend the assistant's abilities with 'skills' from various sources (built-in, community-managed, or personal), creating a marketplace-like experience for agent capabilities. 【Design Strategy】 Define a standard format for skills using a `SKILL.md` file with structured metadata. Discover and load these skills from bundled, workspace, and plugin directories, and provide CLI tools to manage their installation and status. 【Business Logic】 - Step 1: Skill Definition. A skill is defined by a `SKILL.md` file containing YAML metadata. This metadata includes its name, description, and an `openclaw` section that specifies requirements (e.g., required command-line tools) and installation instructions (e.g., a `brew install` formula). - Step 2: Discover Skills. The system discovers skills from three locations: bundled skills that ship with OpenClaw, user-created skills in the `~/.openclaw/skills/` directory, and skills that are included within a plugin. - Step 3: Manage via CLI. The user can interact with skills via the command line, for example: `openclaw skills list` to see all available skills, `openclaw skills install <skill>` to run the installation steps, and `openclaw skills status <skill>` to check if its requirements are met on the current system. - Step 4: Skill Invocation. The `SKILL.md` file can also define trigger phrases. When the user says a trigger phrase in a chat, the system parses the command and executes the associated skill.
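The manifest plus `register(api)` pattern from the plugin bullets above can be sketched as follows. All interfaces, ids, and names here are hypothetical stand-ins chosen for illustration and are not OpenClaw's published types; the manifest is written as a TypeScript object rather than as `openclaw.plugin.json`, and a tiny in-memory host is included so the example runs.

```typescript
// Sketch only: every interface, id and name below is hypothetical and mirrors the
// manifest + register(api) pattern described above, not OpenClaw's actual type definitions.
interface PluginManifest {
  id: string;
  name: string;
  version: string;
  channels?: string[];
  configSchema?: Record<string, unknown>; // JSON Schema for the plugin's config
}

interface ToolDef { name: string; description: string; run(args: Record<string, unknown>): string }
interface ChannelDef { id: string; send(to: string, text: string): void }
interface ServiceDef { id: string; start(): void; stop(): void }

interface PluginApi {
  pluginConfig: Record<string, unknown>;
  logger: { info(msg: string): void };
  registerTool(tool: ToolDef): void;
  registerChannel(channel: ChannelDef): void;
  registerService(service: ServiceDef): void;
}

// Contents an openclaw.plugin.json manifest might carry, written as a TS object for brevity.
const manifest: PluginManifest = {
  id: "acme-chat",
  name: "Acme Chat",
  version: "0.1.0",
  channels: ["acme"],
  configSchema: { type: "object", properties: { apiKey: { type: "string" } }, required: ["apiKey"] },
};

// The plugin's exported register function: it only declares capabilities through the API.
function register(api: PluginApi): void {
  api.registerChannel({ id: "acme", send: (to, text) => api.logger.info(`send to ${to}: ${text}`) });
  api.registerTool({ name: "acme_status", description: "Report Acme connection status", run: () => "connected" });
  api.registerService({
    id: "acme-poller",
    start: () => api.logger.info("poller started"),
    stop: () => api.logger.info("poller stopped"),
  });
}

// Host side: a minimal in-memory registry implementing the API so the sketch runs.
function createRegistry(pluginId: string, config: Record<string, unknown>) {
  const tools: ToolDef[] = [];
  const channels: ChannelDef[] = [];
  const services: ServiceDef[] = [];
  const logs: string[] = [];
  const api: PluginApi = {
    pluginConfig: config,
    logger: { info: (msg) => { logs.push(`[${pluginId}] ${msg}`); } }, // namespaced logger
    registerTool: (t) => { tools.push(t); },
    registerChannel: (c) => { channels.push(c); },
    registerService: (s) => { services.push(s); },
  };
  return { api, tools, channels, services, logs };
}
```

Keeping `register` free of side effects other than API calls lets the host decide when services start and stop.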
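The plugin loader's validate, enable, register, and conflict-resolution steps can be outlined like this. The origins, priority values, and record shapes are assumptions, and config validation is reduced to JSON Schema's `required` keyword; a real loader would use a full schema validator.

```typescript
// Sketch only: origins, priorities, and record shapes are hypothetical; the config check is
// reduced to JSON Schema's "required" keyword rather than full schema validation.
type Origin = "workspace" | "global" | "bundled";
const PRIORITY: Record<Origin, number> = { workspace: 3, global: 2, bundled: 1 };

interface Candidate {
  origin: Origin;
  manifest: { id?: unknown; configSchema?: { required?: string[] } };
  enabled: boolean; // result of config + auto-enable rules (Step 3)
  config: Record<string, unknown>;
  register: () => void;
}

interface LoadRecord {
  id: string;
  origin: Origin;
  status: "loaded" | "disabled" | "error";
  detail?: string;
}

function loadPlugins(candidates: Candidate[]): LoadRecord[] {
  const records: LoadRecord[] = [];
  const claimed = new Set<string>();
  // Highest-priority origin first, so e.g. a workspace plugin overrides a bundled one.
  const ordered = candidates.slice().sort((a, b) => PRIORITY[b.origin] - PRIORITY[a.origin]);

  for (const c of ordered) {
    // Step 2: the manifest must declare a non-empty string id.
    const rawId = c.manifest.id;
    if (typeof rawId !== "string" || rawId === "") {
      records.push({ id: "(unknown)", origin: c.origin, status: "error", detail: "manifest has no id" });
      continue;
    }
    const id = rawId;
    // Step 6 (conflicts). Assumption: the first candidate seen claims the id even if it is later disabled.
    if (claimed.has(id)) {
      records.push({ id, origin: c.origin, status: "disabled", detail: "overridden by a higher-priority plugin" });
      continue;
    }
    claimed.add(id);
    // Step 3: enablement.
    if (!c.enabled) {
      records.push({ id, origin: c.origin, status: "disabled", detail: "not enabled" });
      continue;
    }
    // Step 4: config validation (required keys only in this sketch).
    const required = (c.manifest.configSchema && c.manifest.configSchema.required) || [];
    const missing = required.filter((key) => !(key in c.config));
    if (missing.length > 0) {
      records.push({ id, origin: c.origin, status: "disabled", detail: `invalid config: missing ${missing.join(", ")}` });
      continue;
    }
    // Steps 5-6: register, isolating failures to this plugin.
    try {
      c.register();
      records.push({ id, origin: c.origin, status: "loaded" });
    } catch (err) {
      records.push({ id, origin: c.origin, status: "error", detail: String(err) });
    }
  }
  return records;
}
```

Step 7's cache could then store the returned records, for example keyed by a hash of the plugin directories and the configuration.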

User Control Interfaces

This module covers the primary ways a user interacts with and manages their OpenClaw assistant. It includes the web-based Control UI for operational management, the native companion apps that turn devices into powerful 'nodes' for the agent, and the command-line interface for setup and administration. The design philosophy is to provide multiple interfaces tailored to different tasks, from a visual dashboard for monitoring to deep hardware integration via native apps.

- Control UI WebSocket Lifecycle & Authentication — 【User Value】 Provides a secure, real-time web dashboard for managing the assistant. It uses device-specific credentials when possible, tying control of the gateway to a trusted, previously paired browser rather than to a shared secret alone. 【Design Strategy】 The UI connects to the Gateway via a WebSocket. It implements robust lifecycle management with connection handshakes, automatic reconnection, and an authentication flow that prefers cryptographic device identity over simple tokens. 【Business Logic】 - Step 1: Initiate Connection. The UI opens a WebSocket connection to the configured Gateway URL. - Step 2: Perform Handshake. After connecting, the UI sends a 'connect' request. If it is running in a secure browser context, it generates a cryptographic device identity and uses it to sign the request, proving that the request comes from a specific, previously paired browser instance. - Step 3: Handle Authentication Response. If the Gateway recognizes the device identity, it replies with a temporary device token, which the browser stores for subsequent reconnects. - Step 4: Fall Back to Token/Password. If the page is not in a secure context or device authentication fails, the UI falls back to the user-provided token or password. - Step 5: Manage Reconnection. If the connection drops, the UI reconnects automatically using exponential backoff (e.g., waiting 0.8 s, then 1.4 s, then 2.3 s, up to a maximum of 15 s) to avoid overwhelming the server.

- Control UI RPC and Event Handling — 【User Value】 Creates a responsive and reliable user experience in the web UI by structuring communication as a series of commands and events, and by detecting if any real-time updates were missed. 【Design Strategy】 Implement a custom JSON-RPC-like protocol over the WebSocket. Each request is tagged with a unique ID for response correlation. The server pushes asynchronous events with sequence numbers to detect gaps. 【Business Logic】 - Step 1: Send Request. To perform an action (e.g., send a chat message), the UI sends a JSON frame like `{type: 'req', id: 'uuid-123', method: 'chat.send', ...}`. It stores the request's promise in a pending map. - Step 2: Correlate Response. When the Gateway replies with `{type: 'res', id: 'uuid-123', ...}`, the UI looks up 'uuid-123' in its pending map and resolves the promise with the response payload, updating the UI. - Step 3: Handle Asynchronous Events. The Gateway can push events at any time (e.g., an incoming message). These frames look like `{type: 'event', event: 'message.new', seq: 101, ...}`. - Step 4: Detect Missed Events. The UI keeps track of the last received sequence number (`seq`). If it receives an event with `seq: 103` when the last one was `101`, it knows it missed event `102` and displays a 'Refresh recommended' error to the user, prompting them to reload to get a consistent state.

- Device-as-a-Node Discovery — 【User Value】 Allows companion apps (on iOS, Android, macOS) to find and connect to the user's OpenClaw Gateway automatically, without manual IP address configuration, creating a seamless 'it just works' experience. 【Design Strategy】 The apps use multiple network discovery protocols to locate gateways. Local, zero-configuration discovery is preferred, and DNS-based discovery also covers more complex network topologies (like VPNs). 【Business Logic】 - Step 1: Local Network Scan (Bonjour). On launch, the companion app immediately starts a Bonjour/NSD scan of the local network for services of type `_openclaw-gw._tcp.`, a standard zero-configuration protocol. - Step 2: Wide-Area Network Scan (DNS). If the `OPENCLAW_WIDE_AREA_DOMAIN` environment variable is configured, the app also periodically polls DNS SRV records for that domain. This allows discovery of gateways running on remote servers or within a private Tailscale network. - Step 3: Extract Metadata. From each discovered service, the app extracts the connection details: hostname, port, and a TLS certificate fingerprint used to verify the gateway. - Step 4: Present to User. All discovered gateways, local and remote, are collected into a single list for the user to select from.

- Device Hardware Capability Protocol — 【User Value】 Empowers the AI assistant to act in the real world by giving it control over the user's device hardware, such as the camera, microphone, and location services. 【Design Strategy】 The companion app acts as a 'node', a hardware proxy for the Gateway. It exposes device capabilities to the agent through a structured command protocol over the WebSocket connection. 【Business Logic】 - Step 1: Agent Decides to Use a Tool. The agent, running on the Gateway, decides to use a device-specific tool, for example `camera.snap` to take a picture. - Step 2: Command Sent to Node. The Gateway sends a JSON command such as `{ "method": "camera.snap", ... }` over the WebSocket to the connected companion app (the 'node'). - Step 3: Node Executes the Command. The app receives the command, parses the method name (`camera.snap`), and dispatches it to the native controller responsible for the camera. - Step 4: Native API Invocation. The `CameraCaptureManager` in the app invokes the device's native camera APIs to take a photo. - Step 5: Result Returned to Agent. The captured image is encoded (e.g., as Base64) and sent back to the Gateway as the result of the tool call. The agent can then use this image in its response.
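The authentication fallback (Steps 2 to 4) and reconnect policy (Step 5) of the Control UI lifecycle feature can be sketched as two pure functions. This is a minimal TypeScript sketch under stated assumptions, not the code in ui/src/ui/gateway.ts: the names `chooseAuthMode`, `nextBackoff`, and `backoffSchedule`, the `AuthInputs` shape, and the 1.7x growth factor (inferred from the 0.8 s, 1.4 s, 2.3 s example) are all hypothetical.

```typescript
// Hypothetical sketch of the Control UI auth fallback and reconnect backoff.
// The 1.7x factor is inferred from the example delays, not read from source.

type AuthMode = "device" | "token" | "password" | "none";

interface AuthInputs {
  isSecureContext: boolean; // in a browser: window.isSecureContext
  hasDeviceIdentity: boolean; // a device keypair could be created or loaded
  deviceAuthFailed: boolean; // the gateway rejected the signed connect request
  token?: string; // user-supplied gateway token
  password?: string; // user-supplied gateway password
}

// Prefer the cryptographic device identity; otherwise fall back to shared secrets.
function chooseAuthMode(i: AuthInputs): AuthMode {
  if (i.isSecureContext && i.hasDeviceIdentity && !i.deviceAuthFailed) return "device";
  if (i.token) return "token";
  if (i.password) return "password";
  return "none";
}

const INITIAL_DELAY_MS = 800; // first retry after 0.8 s
const BACKOFF_FACTOR = 1.7; // assumed growth factor
const MAX_DELAY_MS = 15_000; // never wait longer than 15 s

// Exponential backoff without jitter: each delay is the previous one times a
// constant factor, rounded to whole milliseconds and capped at MAX_DELAY_MS.
function nextBackoff(previousMs: number | null): number {
  if (previousMs === null) return INITIAL_DELAY_MS;
  return Math.min(Math.round(previousMs * BACKOFF_FACTOR), MAX_DELAY_MS);
}

// Delays (in ms) for the first n reconnect attempts.
function backoffSchedule(n: number): number[] {
  const delays: number[] = [];
  let prev: number | null = null;
  for (let attempt = 0; attempt < n; attempt++) {
    prev = nextBackoff(prev);
    delays.push(prev);
  }
  return delays;
}

console.log(backoffSchedule(7).join(", ")); // 800, 1360, 2312, 3930, 6681, 11358, 15000
```

Because every client computes the same deterministic schedule, many browsers reconnecting after a gateway restart would retry in lockstep; adding random jitter to each delay is the usual remedy, which relates to the jitter gap noted under Adoption Readiness.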

Core Technical Capabilities

Unified WebSocket Control Plane Architecture

Problem: How to create a single, real-time communication hub for a diverse set of clients (CLI, web UI, mobile apps, remote nodes) to interact with the AI agent and system services in a structured and secure way?

Solution: The system is built around a centralized Gateway that exposes a single WebSocket endpoint. All interactions are modeled as JSON frames over this connection. - Step 1: Protocol Definition. A typed protocol is defined using schemas (TypeBox/JSON Schema) for all possible messages: requests, responses, and asynchronous events. - Step 2: Connection Handshake. Every client, upon connecting, must perform a versioned 'hello' handshake, declaring its identity (e.g., 'macOS companion app', 'Control UI'). The server validates this handshake. - Step 3: RPC-like Interaction. Clients send 'request' frames with a unique ID and method name (e.g., `sessions.send`). The Gateway routes this to the appropriate handler and replies with a 'response' frame that includes the same unique ID, allowing the client to correlate the reply to its request. - Step 4: Event Broadcasting. The Gateway can push asynchronous 'event' frames to all connected clients (e.g., a new message arrives, a tool needs approval). This allows UIs and other clients to have a real-time view of the system state. - Key Value: This architecture decouples all clients from the core logic, allowing new clients (e.g., a new companion app for a different OS) to be added without changing the central Gateway. It provides a single, secure entry point for all system control.
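A minimal sketch of the gateway side of Steps 2 to 4, assuming an in-memory `Connection` whose `outbox` stands in for a real WebSocket. Hand-written type guards replace the TypeBox/AJV schemas so the example has no dependencies; the `connect` method name, its `{ protocol, client }` params, exact-match versioning, and `PROTOCOL_VERSION = 3` are assumptions, not OpenClaw's actual protocol.

```typescript
// Hypothetical gateway-side sketch: handshake gate, method routing, broadcast.
const PROTOCOL_VERSION = 3; // assumed; the real versioning scheme may differ

type Req = { type: "req"; id: string; method: string; params?: unknown };
type Res = { type: "res"; id: string; ok: boolean; payload?: unknown; error?: string };
type Evt = { type: "event"; event: string; seq: number; payload?: unknown };
type MethodHandler = (params: unknown, client: string) => unknown;

// Stand-in for schema validation (TypeBox/AJV in the real system).
function isReq(x: unknown): x is Req {
  const f = x as Partial<Req> | null;
  return typeof f === "object" && f !== null && f.type === "req" &&
    typeof f.id === "string" && typeof f.method === "string";
}

class Connection {
  client: string | null = null; // identity declared in the handshake
  readonly outbox: string[] = []; // stands in for ws.send()
  send(frame: Res | Evt): void {
    this.outbox.push(JSON.stringify(frame));
  }
}

class Gateway {
  private readonly methods = new Map<string, MethodHandler>();
  private readonly connections = new Set<Connection>();
  private seq = 0;

  register(method: string, handler: MethodHandler): void {
    this.methods.set(method, handler);
  }

  attach(conn: Connection): void {
    this.connections.add(conn);
  }

  onMessage(conn: Connection, raw: string): void {
    let parsed: unknown;
    try {
      parsed = JSON.parse(raw);
    } catch {
      return; // malformed JSON; a real server would reject or close the socket
    }
    if (!isReq(parsed)) return;
    const req = parsed;
    const fail = (error: string): void => conn.send({ type: "res", id: req.id, ok: false, error });

    // Step 2: the first request on a connection must be a valid handshake.
    if (conn.client === null) {
      const p = req.params as { protocol?: unknown; client?: unknown } | undefined;
      if (req.method !== "connect" || p?.protocol !== PROTOCOL_VERSION || typeof p?.client !== "string") {
        fail("handshake required");
        return;
      }
      conn.client = p.client;
      conn.send({ type: "res", id: req.id, ok: true, payload: { protocol: PROTOCOL_VERSION } });
      return;
    }

    // Step 3: route by method name and echo the request id in the response.
    const handler = this.methods.get(req.method);
    if (!handler) {
      fail(`unknown method: ${req.method}`);
      return;
    }
    try {
      conn.send({ type: "res", id: req.id, ok: true, payload: handler(req.params, conn.client) });
    } catch (err) {
      fail(err instanceof Error ? err.message : String(err));
    }
  }

  // Step 4: push an event with a monotonically increasing seq to every
  // connection that has completed the handshake.
  broadcast(event: string, payload?: unknown): void {
    const frame: Evt = { type: "event", event, seq: ++this.seq, payload };
    for (const conn of this.connections) {
      if (conn.client !== null) conn.send(frame);
    }
  }
}
```

Keeping the handshake gate and the method table in one place is what lets new client types join without gateway changes: a new companion app only has to speak the same frames.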

Technologies: WebSocket, JSON-RPC-style framing, AJV, TypeBox

Boundaries & Risks: As the single point of communication, the WebSocket server's availability is critical. The custom RPC-like layer has no built-in client-side request timeouts, so each client must implement its own handling for unresponsive requests. All clients must conform to the same protocol version to remain compatible.
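On the client side, the correlation and gap detection described for the Control UI (a pending map keyed by request ID, plus `seq` tracking) can be sketched as follows. The `ok`/`payload`/`error` response fields, the counter-based IDs, and the `GatewayRpcClient` name are assumptions. Like the client described here, the sketch has no per-request timeout, so a request whose response never arrives stays pending indefinitely.

```typescript
// Hypothetical client-side sketch: request/response correlation and seq gaps.
type ReqFrame = { type: "req"; id: string; method: string; params?: unknown };
type ResFrame = { type: "res"; id: string; ok: boolean; payload?: unknown; error?: string };
type EventFrame = { type: "event"; event: string; seq?: number; payload?: unknown };
type InboundFrame = ResFrame | EventFrame;
type Pending = { resolve: (value: unknown) => void; reject: (err: Error) => void };

class GatewayRpcClient {
  private readonly pending = new Map<string, Pending>();
  private readonly send: (json: string) => void;
  private nextId = 0;
  private lastSeq: number | null = null;
  gapDetected = false; // would drive the "Refresh recommended" banner
  onEvent: (frame: EventFrame) => void = () => {};

  constructor(send: (json: string) => void) {
    this.send = send;
  }

  get pendingCount(): number {
    return this.pending.size;
  }

  // Step 1: tag the request with a unique id and park its promise.
  // A counter keeps the sketch deterministic; a real client would use a UUID.
  request(method: string, params?: unknown): Promise<unknown> {
    const id = `req-${++this.nextId}`;
    const frame: ReqFrame = { type: "req", id, method, params };
    return new Promise((resolve, reject) => {
      this.pending.set(id, { resolve, reject });
      this.send(JSON.stringify(frame));
    });
  }

  // Steps 2-4: settle matching requests and track event sequence numbers.
  handleMessage(raw: string): void {
    const frame = JSON.parse(raw) as InboundFrame;
    if (frame.type === "res") {
      const entry = this.pending.get(frame.id);
      if (!entry) return; // unknown or already-settled id
      this.pending.delete(frame.id);
      if (frame.ok) entry.resolve(frame.payload);
      else entry.reject(new Error(frame.error ?? "request failed"));
      return;
    }
    if (typeof frame.seq === "number") {
      // Any jump larger than 1 means at least one event was missed.
      if (this.lastSeq !== null && frame.seq > this.lastSeq + 1) this.gapDetected = true;
      this.lastSeq = frame.seq;
    }
    this.onEvent(frame);
  }
}
```

A timeout would be a small addition in `request`: start a timer that deletes the pending entry and rejects the promise, and clear it when the response arrives.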

Device-as-a-Node Hardware Proxy

Problem: How can a server-based AI assistant gain access to and control hardware-specific functions (camera, location, microphone, local command execution) on a user's personal devices (phone, laptop) securely and seamlessly?

Solution: Instead of being simple clients, the companion apps (iOS/Android/macOS) function as 'nodes' in the OpenClaw network. They act as proxies, exposing device hardware as tools the central agent can call. - Step 1: Discovery. Nodes use zero-configuration networking (Bonjour/mDNS) to discover the Gateway on the local network automatically. They can also use DNS-based service discovery for connecting to remote or VPN-hosted Gateways. - Step 2: Capability Advertisement. When a node connects to the Gateway, it advertises the list of capabilities it supports (e.g., `camera.snap`, `location.get`, `system.run`). - Step 3: Remote Procedure Call. When the agent needs to use a device-specific capability, the Gateway sends a `node.invoke` command over the WebSocket to the appropriate node. The command specifies the tool to run and its arguments. - Step 4: Native Execution. The node receives the command and translates it into a call to the native OS API (e.g., Android's CameraX or macOS's `Process` for running shell commands). - Step 5: Result Marshaling. The result of the native call (e.g., image data, command output) is packaged and sent back to the Gateway as the result of the tool call. - Key Value: This architecture turns every personal device into a potential peripheral for the AI, massively expanding its ability to interact with the real world without requiring the core agent logic to run on the device itself.
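Discovery (Step 1 here, and the Device-as-a-Node Discovery feature earlier) ends with local and wide-area results merged into one list. Below is a sketch of that merge, assuming hypothetical `DiscoveredService`/`GatewayEntry` shapes and a hypothetical TXT key `tlsFingerprint`; only the `_openclaw-gw._tcp` service type and the `OPENCLAW_WIDE_AREA_DOMAIN` variable come from the text. Deduplicating by host and port is also an assumption; a real implementation might key on a stable gateway ID advertised by the gateway.

```typescript
// Hypothetical sketch: merge Bonjour (LAN) and wide-area DNS-SD results.
const SERVICE_TYPE = "_openclaw-gw._tcp";

type Source = "bonjour" | "wide-area";

interface DiscoveredService {
  source: Source;
  instanceName: string;
  host: string;
  port: number;
  txt: Record<string, string>; // DNS-SD TXT record key/value pairs
}

interface GatewayEntry {
  name: string;
  host: string;
  port: number;
  tlsFingerprint?: string;
  sources: Source[];
}

// Wide-area browsing only runs when a domain is configured.
function wideAreaServiceName(env: Record<string, string | undefined>): string | null {
  const domain = env["OPENCLAW_WIDE_AREA_DOMAIN"]?.trim().replace(/\.$/, "");
  return domain ? `${SERVICE_TYPE}.${domain}` : null;
}

// Pull metadata out of each result and collapse gateways seen through both
// discovery paths into a single entry, sorted by name for display.
function mergeGateways(services: DiscoveredService[]): GatewayEntry[] {
  const byEndpoint = new Map<string, GatewayEntry>();
  for (const svc of services) {
    const key = `${svc.host.toLowerCase()}:${svc.port}`;
    const fingerprint = svc.txt["tlsFingerprint"]; // hypothetical TXT key
    const existing = byEndpoint.get(key);
    if (existing) {
      if (!existing.sources.includes(svc.source)) existing.sources.push(svc.source);
      existing.tlsFingerprint ??= fingerprint;
    } else {
      byEndpoint.set(key, {
        name: svc.instanceName,
        host: svc.host,
        port: svc.port,
        tlsFingerprint: fingerprint,
        sources: [svc.source],
      });
    }
  }
  return [...byEndpoint.values()].sort((a, b) => a.name.localeCompare(b.name));
}
```

In a Node-based tool this would be called as `wideAreaServiceName(process.env)`; on mobile the same decision would read app configuration instead.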

Technologies: WebSocket, Bonjour/mDNS, DNS-SD, Native Mobile/Desktop APIs

Boundaries & Risks: This model requires a persistent, low-latency connection between the Gateway and the node. The security of device-local actions (especially `system.run`) is critical and relies on the user granting appropriate permissions to the companion app. The set of available tools depends on which nodes are currently connected.
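On the node, Steps 2, 4 and 5 above (and the `camera.snap` walkthrough in the Device Hardware Capability Protocol feature) reduce to a method-name dispatch table whose keys double as the advertised capability list. In this sketch the `NodeDispatcher` class, the `NodeCommand`/`NodeResult` shapes, and the stubbed camera handler are hypothetical; a real companion app would call native APIs (CameraX, `CameraCaptureManager`) instead of returning fixed bytes.

```typescript
// Hypothetical node-side dispatcher: names and frame shapes are illustrative,
// and the camera handler is a stub in place of native camera APIs.
type NodeCommand = { id: string; method: string; params?: Record<string, unknown> };
type NodeResult =
  | { id: string; ok: true; payload: unknown }
  | { id: string; ok: false; error: string };
type Handler = (params: Record<string, unknown>) => Promise<unknown>;

class NodeDispatcher {
  private readonly handlers = new Map<string, Handler>();

  register(method: string, handler: Handler): void {
    this.handlers.set(method, handler);
  }

  // The list a node would advertise when it connects.
  capabilities(): string[] {
    return [...this.handlers.keys()].sort();
  }

  // Look up the handler by method name, run it, and wrap the outcome so the
  // gateway always gets a result back, even for unknown or failing commands.
  async dispatch(cmd: NodeCommand): Promise<NodeResult> {
    const handler = this.handlers.get(cmd.method);
    if (!handler) return { id: cmd.id, ok: false, error: `unsupported method: ${cmd.method}` };
    try {
      return { id: cmd.id, ok: true, payload: await handler(cmd.params ?? {}) };
    } catch (err) {
      return { id: cmd.id, ok: false, error: err instanceof Error ? err.message : String(err) };
    }
  }
}

const node = new NodeDispatcher();
// Stub: pretend the camera returned these (truncated) JPEG bytes.
node.register("camera.snap", async () => {
  const jpegBytes = new Uint8Array([0xff, 0xd8, 0xff, 0xd9]);
  return { format: "jpeg", base64: Buffer.from(jpegBytes).toString("base64") };
});
```

Deriving the advertised capabilities from the same table that dispatches them keeps the two from drifting apart: a node cannot advertise a tool it has no handler for.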

Dynamic Plugin Extension System

Problem: How to create a platform that can be easily extended by a community of developers with new features (channels, AI models, tools) without requiring changes to the core codebase and without compromising stability?

Solution: The system is designed around a plugin architecture that allows third-party code to be discovered, validated, and integrated at runtime. - Step 1: Standardized Manifest. Each plugin must contain an `openclaw.plugin.json` file. This manifest declares its dependencies, configuration schema, and the capabilities it provides. - Step 2: Dynamic Loading. At startup, the Gateway scans for plugins and loads their code with a dynamic module loader (Jiti), which handles TypeScript and ESM at runtime, so plugins can be written in modern JavaScript/TypeScript without a separate build step. This loading is not sandboxed. - Step 3: Unified API Surface. A loaded plugin is given an `API` object. This object provides a set of stable `register` functions (e.g., `registerChannel`, `registerTool`, `registerHook`). This is the sole entry point for a plugin to interact with the core system. - Step 4: Centralized Registry. When a plugin calls a `register` function, its capability is added to a central registry. For example, a new channel is added to the channel registry. The rest of the system interacts with these registries, not directly with the plugins. - Key Value: This design allows for a clean separation of concerns. The core team can focus on the platform, while the community can build a rich ecosystem of extensions. It is similar to the extension model of VS Code or Chrome.
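A minimal sketch of Steps 1, 3 and 4: parse a manifest, hand the plugin an API object bound to its ID, and record registrations in central maps. The manifest fields, `PluginApi` signatures, and `Registry` layout are simplified assumptions modeled on the `registerTool`/`registerChannel` names above, and the module is passed in directly rather than loaded through Jiti.

```typescript
// Simplified, hypothetical shapes; only the register* naming follows the text.
interface PluginManifest {
  id: string;
  version: string;
  configSchema?: Record<string, unknown>;
}
interface ToolDef { name: string; run: (input: string) => string }
interface ChannelDef { id: string; send: (to: string, text: string) => void }

// The only surface a plugin sees.
interface PluginApi {
  registerTool(tool: ToolDef): void;
  registerChannel(channel: ChannelDef): void;
}
interface PluginModule {
  register(api: PluginApi): void;
}

// Central registries; the rest of the core reads these, not plugin modules.
class Registry {
  readonly tools = new Map<string, { def: ToolDef; pluginId: string }>();
  readonly channels = new Map<string, { def: ChannelDef; pluginId: string }>();
}

// Minimal manifest validation (a real loader would validate with JSON Schema).
function parseManifest(json: string): PluginManifest {
  const m = JSON.parse(json) as Partial<PluginManifest>;
  if (typeof m.id !== "string" || !/^[a-z0-9-]+$/.test(m.id)) throw new Error("manifest: invalid id");
  if (typeof m.version !== "string") throw new Error("manifest: missing version");
  return { id: m.id, version: m.version, configSchema: m.configSchema };
}

// Bind an API object to this plugin's id so every registration is attributed.
function loadPlugin(manifestJson: string, mod: PluginModule, registry: Registry): PluginManifest {
  const manifest = parseManifest(manifestJson);
  const api: PluginApi = {
    registerTool: (def) => {
      registry.tools.set(def.name, { def, pluginId: manifest.id });
    },
    registerChannel: (def) => {
      registry.channels.set(def.id, { def, pluginId: manifest.id });
    },
  };
  mod.register(api);
  return manifest;
}
```

Recording the owning `pluginId` with every registration is what later makes diagnostics possible, such as reporting which plugin contributed a broken tool.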

Technologies: Dynamic Module Loading (Jiti), JSON Schema, Adapter Pattern, Manifest-driven discovery

Boundaries & Risks: Plugins are implicitly trusted and run in the same process as the Gateway, so a poorly written plugin can impact the stability of the entire system. There is no version constraint system, so an old plugin might break with a new version of OpenClaw. Asynchronous initialization in the main `register` function is not supported, requiring more complex patterns for plugins that need async setup.

SSRF Protection via DNS Pinning

Problem: How to allow the assistant to make HTTP requests to the internet (e.g., for its browser tool) while preventing a malicious user from tricking it into attacking internal network services (a Server-Side Request Forgery or SSRF attack)?

Solution: A custom networking layer intercepts and validates all outbound HTTP requests made by the agent. - Step 1: Block known private ranges. A hardcoded blocklist of private IP address ranges (e.g., 10.0.0.0/8, 127.0.0.0/8, 192.168.0.0/16) and special hostnames (`localhost`, `metadata.google.internal`) is maintained. - Step 2: Pre-resolve the hostname. Before making the actual HTTP request, the system performs a DNS lookup on the target hostname. - Step 3: Validate resolved IPs. The system inspects every IP address returned by the DNS lookup; if any of them falls within the private/blocked ranges, the entire request is rejected before it is sent. - Step 4: Pin the address. The HTTP client is then configured to connect *only* to the validated public IP address resolved in the previous step, ignoring any subsequent DNS changes. This is 'DNS pinning'. - Key Value: Because the connection goes to the address that was actually validated, this is designed to defend against common SSRF attacks, including DNS rebinding, making it much safer for the agent to have internet access; the residual gaps are listed under Boundaries & Risks.
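Steps 1 to 4 can be sketched with Node built-ins: `net.isIP`, `dns/promises` `lookup` with `all: true`, and the `lookup` option accepted by `http.request`/`https.request`. The blocklist is an illustrative subset, not OpenClaw's list in src/infra/net/ssrf.ts; IPv6 handling covers only a few special ranges; and `resolveAndPin`/`pinnedLookup` are hypothetical names.

```typescript
// Hypothetical SSRF guard sketch: validate every resolved address, then pin.
import { isIP } from "node:net";
import { lookup } from "node:dns/promises";

// Illustrative subset of blocked IPv4 ranges as [base, prefixLength].
const BLOCKED_V4: Array<[string, number]> = [
  ["0.0.0.0", 8], ["10.0.0.0", 8], ["100.64.0.0", 10], ["127.0.0.0", 8],
  ["169.254.0.0", 16], ["172.16.0.0", 12], ["192.168.0.0", 16],
];
const BLOCKED_HOSTS = new Set(["localhost", "metadata.google.internal"]);

// Dotted quad -> unsigned 32-bit integer.
function v4ToInt(ip: string): number {
  return ip.split(".").reduce((acc, octet) => ((acc << 8) | Number(octet)) >>> 0, 0);
}

// Step 1: CIDR membership test against the blocklist.
function isBlockedV4(ip: string): boolean {
  const addr = v4ToInt(ip);
  return BLOCKED_V4.some(([base, bits]) => {
    const mask = bits === 0 ? 0 : (~0 << (32 - bits)) >>> 0;
    return ((addr & mask) >>> 0) === ((v4ToInt(base) & mask) >>> 0);
  });
}

function isBlockedAddress(ip: string): boolean {
  const family = isIP(ip);
  if (family === 4) return isBlockedV4(ip);
  if (family === 6) {
    const lower = ip.toLowerCase();
    if (lower === "::" || lower === "::1") return true;
    const mapped = /^::ffff:(\d+\.\d+\.\d+\.\d+)$/.exec(lower); // IPv4-mapped IPv6
    if (mapped) return isBlockedV4(mapped[1]);
    const firstHextet = parseInt(lower.split(":")[0] || "0", 16);
    return (firstHextet & 0xfe00) === 0xfc00 || (firstHextet & 0xffc0) === 0xfe80; // fc00::/7, fe80::/10
  }
  return true; // not an IP literal: fail closed
}

// Steps 2-3: resolve once and reject if ANY returned address is blocked.
async function resolveAndPin(hostname: string): Promise<{ address: string; family: 4 | 6 }> {
  const host = hostname.toLowerCase().replace(/\.$/, "");
  if (BLOCKED_HOSTS.has(host) || host.endsWith(".localhost")) throw new Error(`blocked host: ${host}`);
  const addrs = await lookup(host, { all: true });
  if (addrs.length === 0 || addrs.some((a) => isBlockedAddress(a.address))) {
    throw new Error(`blocked address for ${host}`);
  }
  return { address: addrs[0].address, family: addrs[0].family === 6 ? 6 : 4 };
}

// Step 4: a `lookup` override that always answers with the pinned address,
// so the socket cannot re-resolve the hostname to a different IP.
function pinnedLookup(address: string, family: 4 | 6) {
  return (
    _hostname: string,
    options: { all?: boolean } | undefined,
    callback: (...args: unknown[]) => void,
  ): void => {
    if (options?.all) callback(null, [{ address, family }]);
    else callback(null, address, family);
  };
}
```

A caller would pass `lookup: pinnedLookup(address, family)` in the request options, so the socket connects to the validated IP while TLS SNI and the `Host` header still use the original hostname. Redirects are new requests: each hop needs its own validation and pin, otherwise a redirect to an internal host bypasses the check.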

Technologies: DNS Resolution, CIDR blocklists, HTTP Client Middleware

Boundaries & Risks: Pinning protects only the connections it is applied to. The assessment flags a possible DNS rebinding bypass (see Risks): if a later resolution of the same hostname is not covered by the validated pin, an attacker who controls the DNS record can switch it to a private address after the check has passed. The protection also relies on an accurate, up-to-date list of private IP ranges, and it may interfere with legitimate use cases that require connecting to local services.

User-Friendly Port Conflict Resolution

Problem: When a user tries to start the application, it often fails with an obscure 'EADDRINUSE' error if another process is already using the required network port. How can we make this common problem easy for a non-expert to diagnose and fix?

Solution: Instead of crashing, the startup sequence includes a dedicated error-handling path for port conflicts. - Step 1: Probe the port. Before starting the main server, the application briefly tries to bind (listen on) the target port. - Step 2: Catch the specific error. If the bind fails with the `EADDRINUSE` error code, the special handling is triggered. - Step 3: Run diagnostics. The system executes a platform-specific command (like `lsof` on Linux/macOS) to identify which process is listening on that port, including its name and process ID (PID). - Step 4: Provide an actionable error message. The application then displays a clear, human-readable message, such as: "Error: Port 8080 is already in use by process 'Spotify' (PID: 12345). Please stop that process or choose a different port using the --port flag." - Step 5: Self-detection. The diagnostic logic also detects whether the conflicting process is another instance of OpenClaw itself and, in that case, gives a more specific suggestion. - Key Value: This turns a frustrating technical roadblock into a simple, guided troubleshooting step, significantly improving the onboarding experience.
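A sketch of Steps 1 to 5 with Node's `net` and `child_process` modules. `EADDRINUSE` is raised when binding (listening on) a port, so the probe briefly listens rather than connects. The `lsof -nP -iTCP:<port> -sTCP:LISTEN -Fpc` invocation uses standard lsof flags; `parseLsofFields`, `describeConflict`, the message wording, and the name-based self-detection are hypothetical. Because the gateway may show up simply as `node` in lsof, a real self-check may need the full command line rather than the process name.

```typescript
// Hypothetical port-conflict diagnostics sketch (not src/infra/ports.ts).
import * as net from "node:net";
import { execFile } from "node:child_process";

// Steps 1-2: try to bind; EADDRINUSE means another process owns the port.
function probePort(port: number, host = "127.0.0.1"): Promise<"free" | "in-use"> {
  return new Promise((resolve, reject) => {
    const server = net.createServer();
    server.once("error", (err: NodeJS.ErrnoException) => {
      if (err.code === "EADDRINUSE") resolve("in-use");
      else reject(err);
    });
    server.once("listening", () => server.close(() => resolve("free")));
    server.listen(port, host);
  });
}

interface PortOwner { pid: number; command: string }

// Parse `lsof -F pc` output: "p<pid>" starts a process, "c<name>" names it;
// other field lines (e.g. "f<fd>") are ignored.
function parseLsofFields(output: string): PortOwner[] {
  const owners: PortOwner[] = [];
  for (const line of output.split("\n")) {
    if (line.startsWith("p")) owners.push({ pid: Number(line.slice(1)), command: "unknown" });
    else if (line.startsWith("c") && owners.length > 0) owners[owners.length - 1].command = line.slice(1);
  }
  return owners;
}

// Step 3: ask lsof who is listening; resolve [] if lsof is missing or finds nothing.
function findPortOwners(port: number): Promise<PortOwner[]> {
  return new Promise((resolve) => {
    execFile("lsof", ["-nP", `-iTCP:${port}`, "-sTCP:LISTEN", "-Fpc"], (err, stdout) => {
      resolve(err ? [] : parseLsofFields(stdout));
    });
  });
}

// Steps 4-5: turn the diagnosis into an actionable message.
function describeConflict(port: number, owners: PortOwner[]): string {
  if (owners.length === 0) {
    return `Error: port ${port} is already in use. Stop the other process or choose a different port.`;
  }
  const { pid, command } = owners[0];
  if (/openclaw/i.test(command)) {
    return `Error: port ${port} is already used by another OpenClaw instance (PID ${pid}). Stop it before starting a new one.`;
  }
  return `Error: port ${port} is already in use by process '${command}' (PID ${pid}). Stop that process or choose a different port.`;
}

// Putting it together (hypothetical entry point): null means the port is free.
async function checkGatewayPort(port: number): Promise<string | null> {
  if ((await probePort(port)) === "free") return null;
  return describeConflict(port, await findPortOwners(port));
}
```

The generic branch of `describeConflict` is the fallback described below for systems where `lsof` is unavailable.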

Technologies: Node.js `net` module, System command integration (`lsof`)

Boundaries & Risks: The diagnostic feature depends on system-level tools like `lsof` being installed and accessible. On highly locked-down systems or unusual operating systems, the diagnostic command may fail, in which case it would fall back to a more generic error message.

Technical Assessment

Business Viability — 2/10 (Community Driven)

Strong open-source product direction with meaningful engineering depth, but commercial maturity and support model are not evidenced.

OpenClaw is positioned as a self-hosted personal AI assistant that integrates deeply with consumer messaging channels and personal devices, which is a clear user need for privacy-oriented and always-on assistants (README). The project shows signs of serious engineering investment (multi-platform apps, extensive docs, and a broad extension ecosystem), but there is no clear commercial packaging, pricing, or enterprise support commitments evidenced in the provided materials. The dependency on third-party model subscriptions (Anthropic/OpenAI OAuth) means end users still bear ongoing AI costs, which can limit mass adoption but fits a prosumer niche. Overall, it looks viable as an open-source ecosystem project, with commercial viability unproven based on the evidence provided here.

Recommendation: If you are adopting it internally, treat it as a power-user platform for a small group first and validate operational security (DM policies, gateway exposure) before broader rollout. If you are considering investment/partnership, focus diligence on maintainership continuity, release cadence, and whether a paid offering or support model is planned (not evidenced here). If you want to build on it commercially, budget for productizing upgrades: plugin compatibility guarantees, observability, and hardened security defaults for non-expert operators.

Technical Maturity — 3/10 (Creative Approach)

Technically sophisticated and platform-shaped, but not yet hardened to enterprise-grade operational and security expectations.

The project demonstrates a thoughtfully designed local-first control plane with schema-validated protocol frames, multiple auth modes (including tail-network identity), origin allowlisting for browser access, and configuration-driven security auditing (src/gateway/protocol/*, src/gateway/auth.ts, src/gateway/origin-check.ts, src/security/audit*.ts). The agent runtime includes non-trivial reliability work such as transcript repair for tool-call/result ordering and streamed run coordination with lifecycle and compaction events (src/agents/session-transcript-repair.ts, src/agents/pi-embedded-subscribe.handlers.lifecycle.ts). The ecosystem breadth (plugins, skills, hooks, multi-channel connectors, and companion apps with hardware capabilities) indicates a platform mindset rather than a single script (src/plugins/*, src/channels/*, apps/*). However, several operational and security hardening gaps are evident (for example, SSRF rebinding concerns, weak plugin compatibility guarantees, and some client-side reliability gaps), suggesting it is not yet “production-grade” by enterprise standards.

Recommendation: Use it where local-first personal automation is the priority and where a small team can own configuration and security posture. Avoid treating it as an enterprise multi-tenant assistant platform without additional hardening and governance (plugin versioning, auditing, security review of outbound web tooling). If you need regulated deployments, require a formal threat model review of remote exposure modes, proxy trust configuration, and outbound web access controls.

Adoption Readiness — 3/10 (Ready with Effort)

Deployable and well-documented, but operators will need real effort to manage configuration, security, and extension compatibility.

Adoption is helped by a wizard-led onboarding flow and strong documentation emphasis (README; extensive docs tree), plus operational utilities such as port conflict diagnostics, runtime guards, and structured logging (src/infra/ports.ts, src/infra/runtime-guard.ts, src/logging/logger.ts). At the same time, it is a multi-surface system (gateway + UI + channels + optional companion apps) with meaningful configuration and security decisions (DM allowlists, pairing, exposure modes), which increases operational burden. The browser Control UI client does not implement per-request timeouts and uses deterministic reconnect behavior without jitter, which can create UI “hang” scenarios and recovery load under outages (ui/src/ui/gateway.ts). Plugin lifecycle and compatibility constraints (no version constraints, no hot reload, limited update ergonomics) mean extensions may require ongoing engineering involvement (src/plugins/loader.ts, src/plugins/manifest-registry.ts, src/cli/plugins-cli.ts).

Recommendation: Plan adoption as a managed platform: define a standard configuration baseline, run the built-in security audit routinely, and lock down remote access patterns (especially any proxy or tail-network modes). For organizations, standardize a supported plugin set and create an internal compatibility policy (pin versions, test before upgrades). Treat companion apps as optional accelerators; start with gateway + one or two channels to reduce integration complexity.

Operating Economics — 3/10 (Balanced)

Infrastructure costs are low by design, but AI model usage and operational troubleshooting effort will dominate total cost at scale.

The local-first design can reduce centralized infrastructure costs because the gateway runs on the user’s own devices rather than requiring a hosted backend (README positioning; gateway daemon model). The primary variable cost driver is model usage, which is paid via third-party subscriptions or API usage depending on the chosen provider (README mentions OAuth subscriptions), meaning costs scale with usage rather than with OpenClaw infrastructure. Operationally, always-on capabilities (voice wake, device nodes, multi-channel monitoring) can increase end-user device resource usage and support burden, especially when multiple connectors are enabled. Some operational choices (for example, cron jobs retrying indefinitely, and log transport errors being swallowed) can create “silent cost” through wasted cycles and harder troubleshooting (src/cron/service/timer.ts, src/logging/logger.ts).

Recommendation: Define a default model strategy and failover policy to control cost escalation (for example, reserve premium models for specific skills or channels). Set operational guardrails: disable or cap failing scheduled jobs, and ensure logs are reliably shipped/retained so troubleshooting does not become labor-expensive. For larger teams, invest in centralized observability (for example, a dedicated external logging transport with alerting) rather than relying on local log files alone.

Architecture Overview

- Local Gateway Daemon (Control Plane)

- A Node.js (Node 22+) always-on local daemon that acts as the control plane for sessions, channels, tools, cron jobs, and operator actions. It exposes a WebSocket-based RPC protocol with schema validation and multiple authentication options designed to stay local-first while supporting safe remote access patterns (for example, tail-network identity).

- Operator Surfaces (CLI + Browser Control UI)

- A command-line wizard and operational commands drive setup and diagnostics, while a browser-based Control UI maintains a persistent WebSocket connection for configuration, chat/session operations, logs, and approvals. The browser client implements reconnect/backoff and request-response correlation over the same control-plane protocol.

- Agent Runtime (Conversation → Tools → Replies)

- An embedded agent runtime turns each incoming turn into a streamed run, coordinates tool calls, emits lifecycle and compaction events, and performs transcript repair to satisfy strict model-provider API constraints. This layer is focused on reliability under real-world tool-calling and long-context conversations.

- Messaging Channels and Routing

- A multi-channel messaging layer (Discord, Slack, Telegram, WhatsApp, and others via core and extensions) normalizes inbound/outbound messaging and applies access controls such as allowlists and pairing flows. It integrates shared reply dispatch (including chunking) and channel-specific constraints (for example, media size caps).

- Extensibility (Plugins, Skills, Hooks)

- A plugin system loads extensions via manifests, validates configuration with schemas, and lets plugins register tools, channels, model providers, gateway methods, HTTP routes, CLI commands, background services, and hooks. A skills system adds reusable capability bundles with eligibility checks and installation guidance, enabling customization without forking core code.

- Companion Apps (Mobile/Desktop Hardware Proxies)

- Native companion apps (Android, macOS, iOS) discover gateways on LAN and wide-area networks and connect via WebSocket IPC, with support for TLS pinning. They expose device capabilities (voice wake, camera, screen capture, SMS, location, and a visual canvas) to the assistant through a structured command protocol.

Key Strengths

One assistant that works where users already communicate

A single personal assistant experience across many messaging channels is a high-maintenance capability that most competitors avoid.

User Benefit: Users can interact with a single personal assistant across many existing messaging surfaces rather than switching apps or rebuilding workflows per platform. This makes adoption more natural because the assistant meets the user in their daily channels and can respond back through those same channels.

Competitive Moat: Achieving broad, reliable multi-channel coverage is time-consuming because every messaging platform has different APIs, message formats, limits, and authentication models. It also requires ongoing maintenance as platforms change, making it harder to replicate quickly than a single-channel assistant.

Local-first control plane that keeps the assistant “always on” without a hosted backend

A real local platform (not just an app) that can run continuously and coordinate multiple clients and channels.

User Benefit: The assistant can run on the user’s own machines as a persistent service, improving responsiveness and reducing dependence on a vendor-controlled cloud. This also supports a privacy-oriented posture because control and session management live locally.

Competitive Moat: Building a local daemon that safely coordinates sessions, tools, channels, and UI clients requires a coherent protocol, authentication strategy, and operational tooling. The combination of schema-validated protocol evolution, multiple auth modes, and security auditing reflects substantial platform engineering (src/gateway/protocol/*, src/gateway/auth.ts, src/security/audit*.ts).

Device-bound operator access for safer local administration

More secure operator access patterns than typical local dashboards, reducing the blast radius of leaked shared tokens.

User Benefit: Operators can connect to the gateway from the browser with stronger device identity in secure contexts, reducing reliance on reusable shared secrets. This supports safer day-to-day administration from the Control UI when compared to “paste a token into a web page” approaches (ui/src/ui/gateway.ts).

Competitive Moat: Device identity and token storage flows across reconnects are subtle to implement correctly, especially when combined with protocol versioning and fallback behaviors. The system integrates client identity into the connect handshake and stores device tokens for future sessions (ui/src/ui/gateway.ts; src/gateway/server/ws-connection/message-handler.ts).

Companion apps turn personal devices into assistant tools (voice, camera, screen, and more)

A true “assistant on your devices” approach, not just a chat interface.

User Benefit: Users can give the assistant real-world capabilities through their own devices (wake-word listening, camera snapshots, screen recording, location, SMS), enabling workflows that go beyond chat. This makes the assistant feel integrated into daily life rather than confined to text conversations (apps/android/*; apps/ios/*; apps/macos/*).

Competitive Moat: Cross-platform native development plus a stable IPC protocol is a significant investment, especially when combined with discovery, reconnect behavior, and security measures like TLS pinning (apps/android/.../GatewayDiscovery.kt; apps/android/.../GatewaySession.kt; apps/shared/OpenClawKit/.../GatewayTLSPinning.swift).

Interactive visual canvas controlled by the assistant for richer workflows

A visual workspace layer that materially expands what the assistant can do beyond text.

User Benefit: A live visual surface can display and update rich information (dashboards, interactive controls, context panels) that is difficult to express in messaging-only interactions. This supports higher-complexity personal workflows where the assistant can present and manipulate a working space, not just send messages.

Competitive Moat: Keeping an agent-driven visual UI consistent across builds and devices requires both a packaging pipeline and runtime hosting integration (scripts/bundle-a2ui.sh; src/canvas-host/*; companion app canvas controllers). This is a multi-component capability that is harder to reproduce than standard chat UI.

Extension ecosystem that lets third parties add channels, providers, and tools without forking core

A platform-style extension model, enabling an ecosystem instead of a closed assistant.

User Benefit: Teams can tailor the assistant to their environment by adding connectors, tools, or model providers as plugins, reducing vendor lock-in and avoiding deep modifications to core. This is important for long-lived deployments where needs evolve over time (src/plugins/registry.ts; src/plugins/loader.ts).

Competitive Moat: The plugin API covers multiple integration points (tools, channels, model providers, gateway methods, HTTP routes, CLI commands, services, hooks) with schema validation and diagnostics. While plugin systems exist in the market, the breadth of registrations and the tight integration with an assistant runtime is non-trivial to replicate (src/plugins/types.ts; src/plugins/registry.ts).

Reliability layer that repairs tool-call history so models do not reject real conversations

Practical reliability engineering that prevents avoidable model API failures in tool-heavy sessions.

User Benefit: Users get fewer “the model refused because the history was malformed” failures, especially in complex runs involving tools and partial errors. This directly improves perceived reliability and reduces support burden when operating across multiple model providers with strict validation rules (src/agents/session-transcript-repair.ts).

Competitive Moat: Transcript repair for tool-call/result pairing is specialized glue work that requires deep understanding of provider constraints and real-world failure modes. It is not flashy, but it is exactly the type of engineering that distinguishes a demo from a resilient product.
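Transcript repair can be sketched as a single pass that enforces the rule strict providers apply to tool use: every tool call in an assistant turn must be answered, immediately afterwards, by exactly one matching result. The message shape and the synthetic error text are assumptions, and this is not the logic of src/agents/session-transcript-repair.ts; it only illustrates the kind of pairing repair described.

```typescript
// Hypothetical transcript-repair sketch: pair every tool call with one result.
type ToolCall = { id: string; name: string };
type Msg =
  | { role: "user"; text: string }
  | { role: "assistant"; text: string; toolCalls: ToolCall[] }
  | { role: "tool"; toolCallId: string; content: string; isError?: boolean };

function repairTranscript(history: Msg[]): Msg[] {
  const out: Msg[] = [];
  let i = 0;
  while (i < history.length) {
    const msg = history[i++];
    if (msg.role === "tool") continue; // orphaned result with no preceding call: drop it
    out.push(msg);
    if (msg.role !== "assistant" || msg.toolCalls.length === 0) continue;

    // Gather the tool results that immediately follow this assistant turn.
    const results = new Map<string, Msg>();
    while (i < history.length) {
      const next = history[i];
      if (next.role !== "tool") break;
      if (!results.has(next.toolCallId)) results.set(next.toolCallId, next); // keep first, drop duplicates
      i++;
    }
    // Emit exactly one result per call, in call order; synthesize missing ones
    // as errors. Results whose id matches no call are silently dropped.
    for (const call of msg.toolCalls) {
      out.push(results.get(call.id) ?? {
        role: "tool",
        toolCallId: call.id,
        isError: true,
        content: `No result recorded for ${call.name}; the run was interrupted.`,
      });
    }
  }
  return out;
}
```

Synthesizing an explicit error result, rather than deleting the unanswered call, keeps the model informed that the tool was attempted and failed.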

Streaming replies with safeguards against duplicate messages across tools and channels

A smoother, less noisy user experience during streamed runs and tool usage.

User Benefit: Users see responsive partial replies without getting spammed by repeated content when tools also send messages. This is important in multi-channel settings where duplicate messages are particularly annoying and can look like the assistant is malfunctioning (src/agents/pi-embedded-subscribe.ts).

Competitive Moat: Coordinating streaming output with tool side effects requires careful bookkeeping and edge-case handling. The design includes bounded dedup memory and per-message fingerprints, which are details competitors often underestimate until real users complain (src/agents/pi-embedded-subscribe.ts).
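Bounded dedup memory with per-message fingerprints can be sketched as a fixed-capacity set evicted in insertion order (FIFO). The `RecentFingerprints` name, the capacity, the whitespace/case normalization, and the choice of 32-bit FNV-1a as the fingerprint are assumptions. A 32-bit hash can collide, which here would suppress a legitimate message, so a real implementation might keep a longer hash.

```typescript
// Hypothetical bounded dedup sketch for streamed replies and tool sends.

// 32-bit FNV-1a over UTF-16 code units (compact, non-cryptographic).
function fnv1a32(s: string): string {
  let h = 0x811c9dc5;
  for (let i = 0; i < s.length; i++) {
    h ^= s.charCodeAt(i);
    h = Math.imul(h, 0x01000193) >>> 0;
  }
  return h.toString(16);
}

class RecentFingerprints {
  private readonly order: string[] = []; // oldest first
  private readonly seen = new Set<string>();
  private readonly capacity: number;

  constructor(capacity = 64) {
    this.capacity = capacity;
  }

  // Returns true if equivalent text was sent recently; otherwise remembers it.
  isDuplicate(text: string): boolean {
    const normalized = text.trim().replace(/\s+/g, " ").toLowerCase();
    if (normalized === "") return false; // never suppress empty chunks
    const fp = fnv1a32(normalized);
    if (this.seen.has(fp)) return true;
    this.seen.add(fp);
    this.order.push(fp);
    if (this.order.length > this.capacity) {
      const oldest = this.order.shift();
      if (oldest !== undefined) this.seen.delete(oldest); // bounded memory
    }
    return false;
  }
}
```

In use, a tool that sends "Meeting moved to 3pm" to a channel would record it, and a final streamed reply with the same text would then be recognized as a duplicate and not sent again.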

Risks

Outbound web access may be exploitable via DNS rebinding, enabling internal network reach (Commercial Blocker)

The SSRF protection uses DNS pinning based on resolution at request time, but the provided analysis indicates a possible bypass via DNS rebinding when subsequent resolutions change to private addresses. This creates a risk where a hostname that initially resolves to a public IP later resolves to a private IP, potentially allowing unintended access to internal services (src/infra/net/ssrf.ts).

Business Impact: If an attacker can influence URLs the assistant fetches (for example via prompt injection or user-supplied links), the assistant could be tricked into reaching internal services on the user’s network. That can lead to data exposure or lateral movement, which is unacceptable for many commercial or regulated deployments.

Gateway access controls may be inconsistently enforced due to an evidence gap in server wiring (Notable)

Evidence confirms authentication utilities and origin checks exist, but the server startup and the exact enforcement points for all control-plane entry paths were not verified in the provided evidence set. This is a due diligence gap: it is unclear whether every WebSocket and HTTP route consistently applies the intended checks (src/gateway/auth.ts; src/gateway/origin-check.ts; referenced tests in src/gateway/server.*.e2e.test.ts).

Business Impact: Decision makers cannot confidently assess whether the gateway is safe under all exposure modes. This increases adoption risk, because a single unguarded path could undermine an otherwise strong security design.

Misconfigured proxy trust can break remote security assumptions or lock out legitimate users (Notable)

Local-direct request detection and forwarded-header handling depend on correct trusted-proxy assumptions. If a proxy is incorrectly trusted (or not trusted when it should be), requests may be misclassified with respect to “local vs forwarded” logic, impacting Tailscale identity verification and other auth expectations (src/gateway/auth.ts).

Business Impact: In the best case, this causes operational outages where admins cannot connect. In the worst case, it can weaken the intended security model around what counts as a local request, increasing the chance of accidental exposure.
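The trusted-proxy pitfall can be illustrated with the common pattern for forwarded headers: honor `X-Forwarded-For` only when the TCP peer is a configured trusted proxy. This is a generic sketch, not the logic in src/gateway/auth.ts; the function names and the conservative rule that any forwarded header disqualifies a request from being "local direct" are assumptions.

```typescript
// Hypothetical sketch of trusted-proxy handling; not OpenClaw's implementation.
const LOOPBACK = new Set(["127.0.0.1", "::1", "::ffff:127.0.0.1"]);

// Honor X-Forwarded-For only when the TCP peer is a configured trusted proxy,
// walking right to left past any further trusted hops.
function resolveClientIp(
  remoteAddress: string,
  xForwardedFor: string | undefined,
  trustedProxies: ReadonlySet<string>,
): string {
  if (!xForwardedFor || !trustedProxies.has(remoteAddress)) return remoteAddress;
  const hops = xForwardedFor.split(",").map((h) => h.trim()).filter((h) => h.length > 0);
  for (let k = hops.length - 1; k >= 0; k--) {
    if (!trustedProxies.has(hops[k])) return hops[k];
  }
  return hops[0] ?? remoteAddress;
}

// Conservative "local direct" test: a loopback peer with no forwarding header.
// A local reverse proxy (loopback peer plus header) is treated as non-local.
function isLocalDirect(remoteAddress: string, xForwardedFor: string | undefined): boolean {
  return LOOPBACK.has(remoteAddress) && !xForwardedFor;
}
```

Both failure modes show up here. If a local reverse proxy is omitted from `trustedProxies`, `resolveClientIp` returns the loopback address for every remote user, which is why the local check also has to look at the header; if an untrusted peer is listed as trusted, its spoofed `X-Forwarded-For` is believed.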

Browser administration may fall back to reusable shared secrets in insecure contexts (Notable)

The browser Control UI uses device identity only in secure contexts; otherwise it falls back to token/password authentication. This increases reliance on reusable secrets when operators connect via non-secure contexts, which is explicitly a weaker posture than device-bound credentials (ui/src/ui/gateway.ts).

Business Impact: If an operator uses the Control UI in an insecure setup, leaked tokens become a practical risk. This can lead to unauthorized control of the assistant, including triggering tools or reading sensitive session content.

Long-lived self-signed certificates increase the blast radius of key compromise (Notable)

Self-signed TLS certificates are generated with a long validity period (10 years) and there is no evidenced automated rotation mechanism. This increases exposure if a private key is compromised, because it remains usable for a long time (src/infra/tls/gateway.ts).

Business Impact: Organizations and security-conscious users will view long-lived keys as a governance and incident-response problem. It can block adoption in environments that require routine key rotation and short certificate lifetimes.

Plugin compatibility failures can cause unpredictable downtime after upgrades (Notable)

Plugins declare versions, but there is no enforced compatibility checking between plugin and core platform versions. This can allow incompatible plugins to load and fail at runtime, with unclear failure modes (src/plugins/manifest-registry.ts; src/plugins/loader.ts).

Business Impact: Teams may avoid upgrading due to fear of breaking critical integrations, or they may experience outages after upgrades. This undermines long-term maintainability and raises total cost of ownership for any serious deployment.
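Enforced compatibility checking usually means a plugin declares the range of core versions it supports and the loader refuses anything outside it. The sketch below assumes a hypothetical `coreRange` manifest field and supports only caret ranges on plain `x.y.z` versions. A production loader would more likely delegate to an established library such as the `semver` npm package (`semver.satisfies`).

```typescript
// Hypothetical manifest shape; `coreRange` is not a documented OpenClaw field.
interface PluginManifest { id: string; version: string; coreRange?: string; }

type Semver = [number, number, number];

function parseSemver(v: string): Semver | null {
  const m = /^(\d+)\.(\d+)\.(\d+)$/.exec(v.trim());
  return m ? [Number(m[1]), Number(m[2]), Number(m[3])] : null;
}

function compareSemver(a: Semver, b: Semver): number {
  return a[0] - b[0] || a[1] - b[1] || a[2] - b[2];
}

// Caret semantics: >= base, and the left-most non-zero component must not change.
function satisfiesCaret(version: string, range: string): boolean {
  if (!range.startsWith("^")) return false; // only caret ranges in this sketch
  const v = parseSemver(version);
  const base = parseSemver(range.slice(1));
  if (!v || !base || compareSemver(v, base) < 0) return false;
  if (base[0] > 0) return v[0] === base[0];
  if (base[1] > 0) return v[0] === 0 && v[1] === base[1];
  return v[0] === 0 && v[1] === 0 && v[2] === base[2];
}

// Returns null when the plugin may load, or a human-readable reason when it may not.
function checkCompatibility(manifest: PluginManifest, coreVersion: string): string | null {
  if (!manifest.coreRange) return `plugin ${manifest.id} declares no core compatibility range`;
  return satisfiesCaret(coreVersion, manifest.coreRange)
    ? null
    : `plugin ${manifest.id}@${manifest.version} requires core ${manifest.coreRange}, found ${coreVersion}`;
}
```

Failing at load time with a specific message is the point: an incompatible plugin is rejected before it can fail unpredictably at runtime.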

Plugin registration cannot perform async initialization, limiting real-world integrations (Notable)

If a plugin registration routine returns a Promise, the loader warns but does not await it, effectively disallowing asynchronous initialization during registration. This forces plugin authors into less intuitive patterns or separate service start lifecycles (src/plugins/loader.ts).

Business Impact: Some integrations (databases, remote configuration, credential fetching) become harder to implement cleanly, which slows ecosystem growth and increases maintenance burden for plugin authors.
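A loader that awaits registration is one way to lift this restriction. The sketch below is built on three assumptions:

- The `PluginModule` and `PluginApi` shapes are hypothetical, not the OpenClaw plugin API.
- Each `register()` result is wrapped in `Promise.resolve()`, so synchronous and asynchronous plugins share one code path.
- A per-plugin timeout keeps a hung initializer from stalling startup, and failures are recorded per plugin instead of aborting the whole load.

```typescript
// Hypothetical plugin shapes; not the OpenClaw plugin API.
interface PluginApi { registerTool(name: string): void; }
interface PluginModule { id: string; register(api: PluginApi): void | Promise<void>; }
interface LoadResult { loaded: string[]; failed: { id: string; error: string }[]; }

function withTimeout<T>(p: Promise<T>, ms: number, label: string): Promise<T> {
  return new Promise<T>((resolve, reject) => {
    const timer = setTimeout(() => reject(new Error(`${label} timed out after ${ms} ms`)), ms);
    p.then(
      (v) => { clearTimeout(timer); resolve(v); },
      (e) => { clearTimeout(timer); reject(e); },
    );
  });
}

async function loadPlugins(plugins: PluginModule[], api: PluginApi, timeoutMs = 5000): Promise<LoadResult> {
  const result: LoadResult = { loaded: [], failed: [] };
  for (const plugin of plugins) {
    try {
      // A synchronous throw from register() is caught here too.
      await withTimeout(Promise.resolve(plugin.register(api)), timeoutMs, `register(${plugin.id})`);
      result.loaded.push(plugin.id);
    } catch (err) {
      result.failed.push({ id: plugin.id, error: err instanceof Error ? err.message : String(err) });
    }
  }
  return result;
}
```

Loading sequentially keeps registration order deterministic. A loader could run independent plugins concurrently instead, at the cost of less predictable ordering.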

Plugin ID collisions can silently disable expected functionality (Notable)

When multiple plugins share the same identifier, later plugins are disabled with an override message based on priority rules. The evidence indicates this can be easy to miss unless operators inspect deep status output (src/plugins/loader.ts).

Business Impact: Operators may believe a plugin is active when it is not, causing missing channels/tools and extended troubleshooting time. This can be especially costly in environments that rely on multiple extensions.
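The core of the fix is to keep the priority rule but return the losers as first-class data, so startup can log them prominently. The sketch below makes two assumptions: the `origin` and `priority` fields are illustrative, and ties keep the first-seen candidate. Neither is confirmed OpenClaw behaviour.

```typescript
// Hypothetical candidate shape; "origin" and "priority" are illustrative fields.
interface PluginCandidate { id: string; origin: string; priority: number; }
interface IdResolution { active: Map<string, PluginCandidate>; shadowed: string[]; }

function resolvePluginIds(candidates: PluginCandidate[]): IdResolution {
  const active = new Map<string, PluginCandidate>();
  const shadowed: string[] = [];
  for (const c of candidates) {
    const current = active.get(c.id);
    if (!current) {
      active.set(c.id, c);
      continue;
    }
    // Higher priority wins; on a tie the first-seen candidate stays active.
    const winner = c.priority > current.priority ? c : current;
    const loser = winner === c ? current : c;
    active.set(c.id, winner);
    shadowed.push(`plugin "${c.id}" from ${loser.origin} is disabled; overridden by ${winner.origin}`);
  }
  return { active, shadowed };
}
```

A caller would then emit every `shadowed` entry as a startup warning, for example with `console.warn`, instead of leaving it to detailed status output.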

Plugins lack a built-in update workflow, increasing operational maintenance cost (Notable)

The CLI supports installing plugins, but there is no evidence of a built-in mechanism for updating them, so users must manage updates manually (src/cli/plugins-cli.ts).

Business Impact: Manual updates increase security risk (outdated dependencies) and reduce adoption in organizations that require predictable patching processes.

Plugin changes require restarts, disrupting active sessions (Notable)

Plugin loading occurs at startup with caching; changes to plugins or plugin configuration require a restart to take effect (src/plugins/loader.ts).

Business Impact: This creates avoidable downtime and makes rapid iteration difficult. For users relying on an always-on assistant, restarts degrade the “always available” promise.

External plugin catalog errors can be silently ignored, hiding deployment mistakes (Notable)

External channel catalog JSON parsing is fail-open: invalid files may be skipped without a clear, operator-visible error, which can hide configuration mistakes (src/channels/plugins/catalog.ts).

Business Impact: Operators may spend significant time debugging why certain channels do not appear or behave as expected, increasing support costs and reducing trust in the platform.
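A middle ground between fail-open and fail-closed is to keep loading valid entries while collecting every parse or schema error for display. In the sketch below, the `CatalogEntry` shape (an `id` and a `label`) and the function names are hypothetical, not the real catalog schema.

```typescript
// Hypothetical catalog entry shape; the real schema may differ.
interface CatalogEntry { id: string; label: string; }
interface CatalogLoad { entries: CatalogEntry[]; errors: string[]; }

function isCatalogEntry(x: unknown): x is CatalogEntry {
  if (typeof x !== "object" || x === null) return false;
  const o = x as Record<string, unknown>;
  return typeof o["id"] === "string" && typeof o["label"] === "string";
}

function parseCatalogs(files: { path: string; text: string }[]): CatalogLoad {
  const out: CatalogLoad = { entries: [], errors: [] };
  for (const f of files) {
    let data: unknown;
    try {
      data = JSON.parse(f.text);
    } catch (err) {
      // Record the failure with its file path instead of silently skipping it.
      out.errors.push(`${f.path}: invalid JSON (${err instanceof Error ? err.message : String(err)})`);
      continue;
    }
    if (!Array.isArray(data)) {
      out.errors.push(`${f.path}: expected a JSON array`);
      continue;
    }
    data.forEach((item, i) => {
      if (isCatalogEntry(item)) out.entries.push(item);
      else out.errors.push(`${f.path}[${i}]: missing string "id" or "label"`);
    });
  }
  return out;
}
```

One bad file no longer hides the whole catalog, and a non-empty `errors` list gives operators a concrete path and index to fix.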

Connector loading may become stale if the plugin registry is mutated in place (Notable)

Channel plugin loading caches by registry object identity, clearing the cache only if the registry reference changes. If the registry mutates in place, cached connector resolution can become stale (src/channels/plugins/load.ts).

Business Impact: Operators may see inconsistent behavior during plugin changes or dynamic enable/disable scenarios, leading to confusion and potentially missed messages.
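A common fix for identity-keyed caches is to pair the object reference with a version counter that every mutation increments. The cache then invalidates on either signal. Both classes in the sketch below are hypothetical stand-ins for the real registry and loader, and connectors are modelled as plain strings for brevity.

```typescript
// Hypothetical registry; connectors are modelled as strings for illustration.
class ChannelRegistry {
  private readonly plugins = new Map<string, string>();
  private _version = 0;
  get version(): number { return this._version; }
  set(id: string, connector: string): void {
    this.plugins.set(id, connector);
    this._version++; // every in-place mutation bumps the version
  }
  delete(id: string): void {
    if (this.plugins.delete(id)) this._version++;
  }
  get(id: string): string | undefined { return this.plugins.get(id); }
}

class ConnectorCache {
  private registry: ChannelRegistry | null = null;
  private seenVersion = -1;
  private readonly cache = new Map<string, string | undefined>();

  resolve(registry: ChannelRegistry, id: string): string | undefined {
    // Invalidate on a new registry object OR an in-place mutation of the same one.
    if (registry !== this.registry || registry.version !== this.seenVersion) {
      this.cache.clear();
      this.registry = registry;
      this.seenVersion = registry.version;
    }
    if (!this.cache.has(id)) this.cache.set(id, registry.get(id));
    return this.cache.get(id);
  }
}
```

The version check costs one integer comparison per lookup, which is usually negligible next to the cost of resolving a stale connector.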

Channel policy behavior may diverge across connectors, increasing governance and support burden (Notable)

Evidence suggests some channels use a shared normalization/policy pipeline while others (for example, LINE) have separate, channel-specific implementations. This can cause policy enforcement and behavior differences across channels (src/channels/plugins/normalize/*; src/line/*).

Business Impact: In multi-channel deployments, inconsistent access rules and message handling lead to user confusion, uneven security posture, and higher support overhead.

UI requests can hang indefinitely due to missing client-side timeouts (Notable)

The Control UI keeps a map of pending requests and clears it on response or socket close, but it does not implement per-request timeouts. A request that never receives a response can remain pending until the connection closes (ui/src/ui/gateway.ts).

Business Impact: Operators may experience a frozen or confusing UI during partial failures, increasing operational risk and making incident response slower.
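Per-request timeouts can be layered onto the existing pending-request map. The sketch below is a hypothetical request tracker with these assumptions:

- The `send` callback stands in for the WebSocket write.
- The 15-second default timeout is an example value.
- `failAll` models the existing clear-on-close behaviour.

```typescript
// Hypothetical request tracker; `send` stands in for the WebSocket write.
interface PendingRequest {
  resolve: (payload: unknown) => void;
  reject: (err: Error) => void;
  timer: ReturnType<typeof setTimeout>;
}

class RequestTracker {
  private nextId = 1;
  private readonly pending = new Map<number, PendingRequest>();
  private readonly send: (id: number, method: string) => void;
  private readonly timeoutMs: number;

  constructor(send: (id: number, method: string) => void, timeoutMs = 15_000) {
    this.send = send;
    this.timeoutMs = timeoutMs;
  }

  request(method: string): Promise<unknown> {
    const id = this.nextId++;
    return new Promise<unknown>((resolve, reject) => {
      const timer = setTimeout(() => {
        // Remove the entry so a late reply is ignored rather than delivered.
        this.pending.delete(id);
        reject(new Error(`${method} (#${id}) timed out after ${this.timeoutMs} ms`));
      }, this.timeoutMs);
      this.pending.set(id, { resolve, reject, timer });
      try {
        this.send(id, method);
      } catch (err) {
        clearTimeout(timer);
        this.pending.delete(id);
        reject(err instanceof Error ? err : new Error(String(err)));
      }
    });
  }

  // Called when a response frame with a matching id arrives.
  handleResponse(id: number, payload: unknown): void {
    const entry = this.pending.get(id);
    if (!entry) return; // unknown id, or already timed out
    clearTimeout(entry.timer);
    this.pending.delete(id);
    entry.resolve(payload);
  }

  // Called on socket close: fail every outstanding request at once.
  failAll(reason: string): void {
    for (const entry of this.pending.values()) {
      clearTimeout(entry.timer);
      entry.reject(new Error(reason));
    }
    this.pending.clear();
  }

  get size(): number {
    return this.pending.size;
  }
}
```

With this in place, a partial gateway failure surfaces as a bounded, explainable error in the UI rather than an indefinitely spinning request.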

Many clients may reconnect simultaneously after an outage, slowing recovery (Notable)

The Control UI reconnect logic uses backoff but does not apply randomized jitter, increasing the chance of synchronized reconnect storms after gateway restarts (ui/src/ui/gateway.ts).

Business Impact: In environments with multiple operator clients, the gateway can be overloaded during recovery, prolonging downtime and degrading user experience.
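Adding jitter is a small change to the delay calculation. The sketch below uses the "full jitter" variant of exponential backoff: each delay is drawn uniformly between zero and the capped exponential ceiling. The 1-second base and 30-second cap are example values, and the function names are hypothetical. The random source is injectable so the behaviour can be tested deterministically.

```typescript
// Hypothetical defaults: a 1 s base delay and a 30 s cap are example values.
function reconnectDelayMs(
  attempt: number,
  baseMs = 1000,
  capMs = 30_000,
  random: () => number = Math.random,
): number {
  // Exponential ceiling for this attempt, clamped to the cap.
  const ceiling = Math.min(capMs, baseMs * Math.pow(2, attempt));
  // Full jitter: choose uniformly in [0, ceiling) so clients disconnected by
  // the same gateway restart spread their reconnect attempts out.
  return Math.floor(random() * ceiling);
}

// Usage: schedule the next reconnect after a dropped connection.
function scheduleReconnect(attempt: number, connect: () => void): ReturnType<typeof setTimeout> {
  return setTimeout(connect, reconnectDelayMs(attempt));
}
```

Full jitter trades a slightly less predictable delay for a much flatter reconnect load. Because the cap still bounds the worst case, an individual operator never waits longer than it would without jitter.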